Recurrent Training for Pilots: Master Drone Operations

You know the feeling. You fly paid missions every week, you can set up a site quickly, and your standard workflow is smooth. Then one day you stop and ask yourself a harder question: if the aircraft loses a key system halfway through a job, or the client changes the brief on location, or the airspace picture shifts faster than expected, are your reactions still sharp or just familiar?

That gap matters more than most pilots admit. Routine work can make you efficient while subtly making you narrow. You get fast at the flights you always do and rusty at the things you hope never happen.

That’s why recurrent training for pilots matters so much in drone operations. In manned aviation, recurrent training is baked into the profession. In commercial drone work, the rules are far less defined, which leaves a lot of operators sitting somewhere between legal minimums and genuine operational readiness. The operators who close that gap on purpose usually run safer jobs, present better to clients, and spend less time scrambling when something goes wrong.

The Professional Pilot's Blind Spot

A lot of drone pilots confuse smooth operations with deep proficiency. They’re not the same thing.

If you fly the same mission types over and over, your setup, launch, capture, and recovery flow gets polished. That’s useful. But repeated familiarity can hide skill fade in the exact areas that matter under pressure: manual control, emergency decision-making, degraded GPS procedures, airspace judgment, crew communication, and abnormal termination of a mission.

The blind spot usually shows up when a pilot hasn’t practiced a non-routine event in months. They still remember the procedure in theory. They don’t execute it cleanly in real time.

The gap in the rules

For drone professionals, there’s another problem. Manned aircraft pilots operate under clear FAA recurrent training structures, but commercial drone operators under Part 107 don't have an equivalent standardized recurrent training requirement, which creates ambiguity and pushes operators to define their own frameworks voluntarily, as outlined in this overview of recurrent training program expectations.

That lack of clarity frustrates some operators. It should motivate professionals.

When the regulation is light, the market starts sorting operators another way. Clients look for maturity. Prime contractors look for documentation. Internal safety leads look for consistency. The pilot who can show a real training system stands apart from the pilot who says, “I fly all the time, so I’m good.”

What professionals do differently

A strong drone operation treats recurrent training as a business discipline, not a remedial event.

That means:

- Training on a schedule: not only when a checkride, audit, or customer requirement forces it

- Practicing failure modes: not just normal mission profiles

- Documenting outcomes: so training becomes visible, repeatable, and defensible

- Using training to shape standards: especially when the regulation leaves room for interpretation

Practical rule: If a skill only appears in your ops manual and never in your training cycle, it won’t hold up well under stress.

The opportunity is straightforward. Build the standard you wish the industry had already made mandatory, then use it to run tighter operations than your competitors.

Beyond Currency: What Recurrent Training Really Means

A pilot can be current and still not be ready.

That’s the core distinction many operators miss. Currency means you’ve met the requirement that allows you to keep operating. Proficiency means you can handle the mission, the edge cases, and the surprises without your performance falling apart. Recurrent training for pilots is about proficiency.

A useful comparison is music. A professional musician doesn’t practice scales because they’ve forgotten how to play. They practice scales because fundamentals decay, bad habits creep in, and performance under pressure depends on repetition that is deliberate, not casual.

Flying works the same way.

Hours alone don't tell you much

One of the most important findings from the industry side of aviation is that structured, recent training matters more than raw time accumulation. The Pilot Source Study found that pilots with 1,500 flight hours performed worse in airline training programs than pilots with fewer, more structured hours, and the study’s principal researcher said “total hours are not determinative” of proficiency in this context, as detailed by the Regional Airline Association’s summary of the Pilot Source Study.

That should land hard with drone operators. Plenty of pilots assume volume equals readiness. It doesn’t. Repetition of the same easy task isn’t the same as structured development.

What recurrent training actually does

Done properly, recurrent training sharpens three things at once:

- Recall under pressure: emergency and abnormal actions become easier to access quickly

- Decision quality: pilots get practice making trade-offs before they face them on live jobs

- Confidence with boundaries: pilots become better at knowing when to continue, adapt, or stop

That’s why even manned aviation’s baseline expectations matter as a mindset reference. If you want a simple outside benchmark for how aviation treats ongoing proficiency, DuBois Aviation’s guide to FAR 61.56 training expectations is a useful reminder that legal signoff and actual readiness are related, but not identical.

What doesn't work

A weak recurrent program usually has one of these problems:

| Approach | Why it fails |
|---|---|
| Only classroom refreshers | Knowledge stays abstract and doesn’t test execution |
| Only logging more missions | Familiar routes and profiles mask blind spots |
| Only annual review | Too much drift happens between events |
| No debrief standard | Pilots repeat mistakes because nobody captures them |

The best recurrent training doesn't ask, “Did the pilot fly recently?” It asks, “Can the pilot still perform when the mission stops being routine?”

If you want better outcomes, stop treating training as a calendar event. Treat it as controlled exposure to risk, complexity, and judgment.

Navigating Drone Pilot Training Regulations

The regulation question comes up fast once you try to formalize training. Operators want a clear answer to a simple question: what’s required, and what’s just smart practice?

For drone teams, the answer depends heavily on the kind of work being done, the operating model, and whether your missions sit entirely inside standard Part 107 work or move into more complex frameworks.

Part 107 and the practical minimum

Most commercial drone operators start with Part 107. That gives you the legal baseline, but it doesn’t give you a complete recurrent training system. The regulation tells you how to remain qualified. It does not build your company standard, your emergency drill cycle, or your competency checks.

If you need a clean refresher on that piece, Dronedesk’s guide to the Part 107 recurrent test is a practical reference point for what the baseline requirement looks like in day-to-day operations.

For a lot of teams, that baseline becomes the ceiling. That’s the mistake.

Why Part 135 is useful as a benchmark

Most drone operators won’t be running under the same recurrent framework as crewed charter operators. But Part 135 is still useful because it shows what serious compliance administration looks like when the regulator expects recurring checks to be tightly managed.

Under Part 135, operators deal with a 12-month general recurrent training cycle and a 6-month instrument proficiency check cycle, creating real administrative overhead for each pilot and requiring robust tracking systems, as explained in this breakdown of recurrent training timelines and lookback periods.

That’s not a drone rule. It is a strong lesson.

If you manage several pilots, multiple aircraft, different payloads, and varied client requirements, you already know how easy it is for one training item to slip. Add subcontractors, remote crews, or specialized mission sets and the spreadsheet starts to crack.

Compliance isn't just a legal issue

A lot of operators frame training records as something they’ll sort out later if a client asks. In practice, records shape three separate outcomes:

- Audit readiness: Can you show what each pilot was trained on and when?

- Operational consistency: Do your crews perform the same way across sites and contracts?

- Risk defense: If there’s an incident, can you show a disciplined training process?

For teams working with public sector buyers or prime contractors, even basic FAA literacy can matter in procurement and vendor review conversations. If that world intersects with your operation, this plain-English guide to the FAA’s role in federal contracting helps connect the regulator to the broader contracting environment.

A workable rule set for drone teams

Because the drone side lacks one standardized recurrent schedule, most professional operators need to define one internally. Good internal policy usually covers:

- Knowledge refreshers tied to regulation, airspace, and operational updates

- Hands-on checks for manual flight, contingency handling, and aircraft-specific procedures

- Mission-specific reviews for inspection, mapping, media, or BVLOS-adjacent complexity where relevant

- Clear recordkeeping so the program survives staff changes and customer scrutiny

If the regulation is vague, your internal standard has to be clearer, not looser.

The operators who handle this well don’t wait for the FAA to tell them every interval and every scenario. They borrow the discipline from manned aviation and scale it to drone reality.

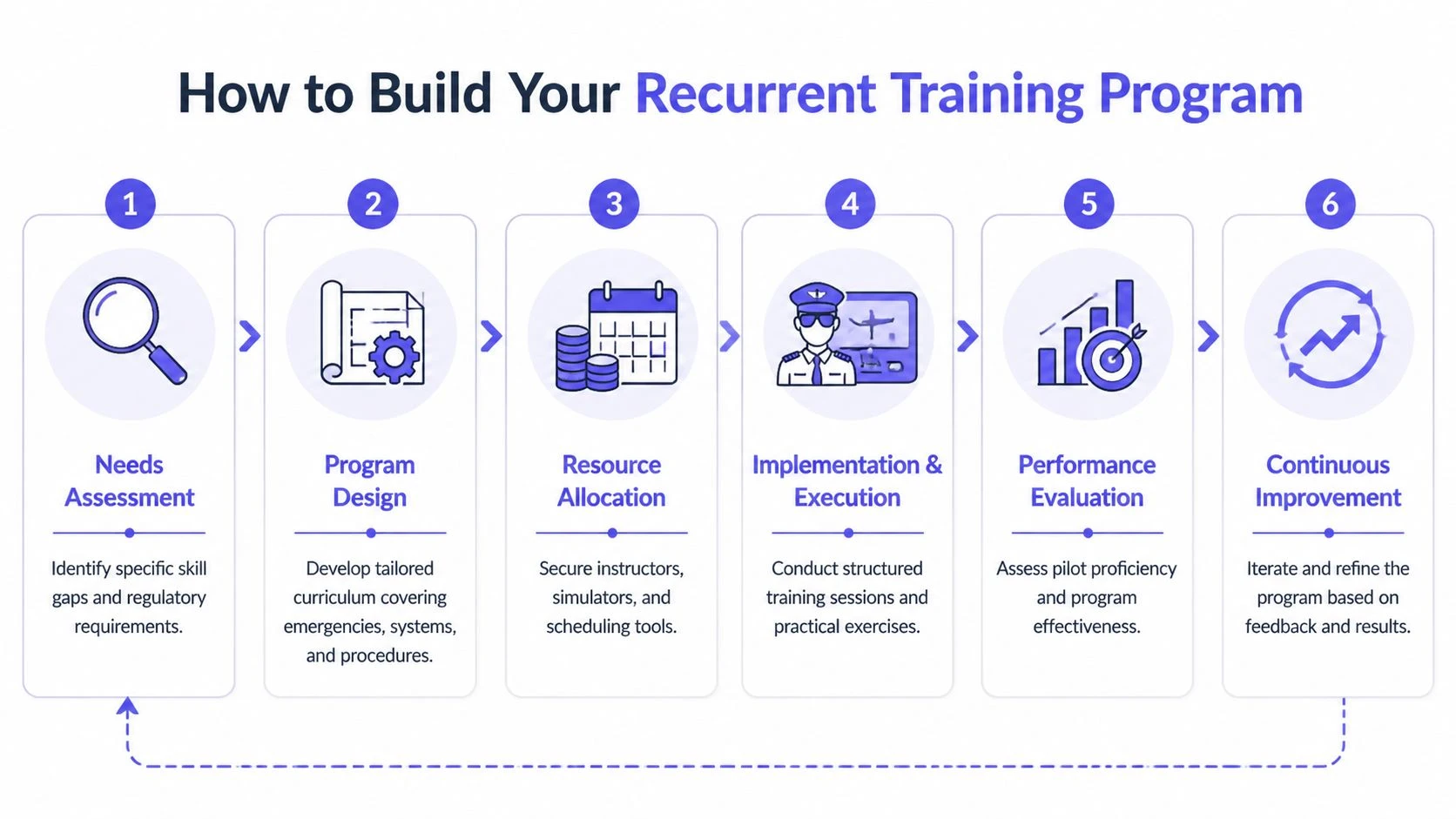

How to Build Your Recurrent Training Program

The best training programs are built backward from risk. Not from what’s easy to schedule, not from what the team happens to enjoy, and not from whatever the last instructor preferred.

That’s where Competency-Based Training and Assessment, or CBTA, earns its place. Modern recurrent training is built on CBTA principles, with proficiency validated through objective performance measures. For drone operators, that includes standards such as UAS Recurrent Pilot Training requiring 16 hours over 2 days and completion of NIST Aerial Tests at level 3 (Open) and level 4 (Obstructed), according to Boeing’s recurrent training framework overview.

That matters because it shifts the question from “Did we run a training day?” to “What can this pilot demonstrate?”

Start with your operation, not a generic syllabus

A survey pilot, a roof inspection team, and a media crew don’t carry the same operational risks. Their recurrent training shouldn’t be identical.

Build your program by looking at:

- Aircraft mix: DJI Mavic, Matrice, enterprise payloads, FPV platforms, or fixed-wing systems all change the training need

- Mission profile: corridor work, asset inspection, filming near people, confined-area launches, or recurring construction progress flights

- Pilot experience spread: solo owner-operator teams train differently than mixed-experience crews

- Client and contract pressure points: documentation, safety brief quality, data integrity, and site coordination often matter as much as stick skills

A generic annual refresher sounds organized. It usually misses the actual hazards.
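To make “start with your operation” concrete, here is a minimal Python sketch that derives a recurrent training module list from the aircraft and mission mix. Every factor and module name below is a hypothetical example, not an industry standard; substitute the categories from your own ops manual.

```python
# Hypothetical mapping from operational factors to recurrent training modules.
# All keys and module names are illustrative assumptions, not a published standard.
CORE_MODULES = {"airspace_review", "emergency_procedures", "crew_coordination"}

AIRCRAFT_MODULES = {
    "enterprise_multirotor": {"manual_handling", "payload_setup"},
    "fpv": {"manual_handling", "loss_of_video_response"},
    "fixed_wing": {"manual_handling", "landing_zone_planning"},
}

MISSION_MODULES = {
    "asset_inspection": {"obstacle_environment", "client_safety_boundaries"},
    "filming_near_people": {"dynamic_site_changes", "people_and_crowd_risk"},
    "mapping": {"procedural_accuracy", "degraded_gps"},
}


def required_modules(aircraft: list[str], missions: list[str]) -> set[str]:
    """Return core modules plus every module implied by the fleet and mission mix.

    Unknown aircraft or mission types raise KeyError on purpose, so a new
    platform or job type cannot slip into service without a training decision.
    """
    modules = set(CORE_MODULES)
    for kind in aircraft:
        modules |= AIRCRAFT_MODULES[kind]
    for mission in missions:
        modules |= MISSION_MODULES[mission]
    return modules


inspection_team = required_modules(["enterprise_multirotor"], ["asset_inspection"])
media_team = required_modules(["enterprise_multirotor"], ["filming_near_people"])
print(sorted(inspection_team - media_team))
# prints ['client_safety_boundaries', 'obstacle_environment']
```

The same aircraft flown for different work produces different training content, which is the point of building backward from the mission rather than from a generic syllabus.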

Use three training pillars

A practical recurrent training program for pilots in drone operations should cover three pillars.

Knowledge

This is the ground side. Regulations, airspace interpretation, weather decision-making, aircraft limitations, battery handling, human factors, and company procedures all belong here.

Keep it short and specific. Nobody needs long slides that restate material they can read elsewhere.

Focus on questions like:

- What airspace mistakes have shown up recently?

- Which emergency procedures are pilots least likely to recall cleanly?

- What changed in our internal procedures, checklists, or client requirements?

- Which aircraft-specific settings create the most preventable errors?

Skills

Many teams underinvest in this regard. Skills work should include manual handling, loss-of-automation response, degraded navigation practice where safe and appropriate, precision takeoff and landing, and payload setup discipline.

If you want objective benchmarks, the NIST lane concept is useful because it forces measurable performance, not vague impressions. Even if you don’t formally run the same testing environment every time, borrowing that mindset improves consistency.

Scenarios

Scenario work is where pilots learn to think instead of recite. Build short drills around realistic problems:

- Site briefing changes after arrival

- Wind or visibility trend requires mission redesign

- Loss of primary landing zone

- Crew communication failure

- Remote pilot and visual observer disagreement

- Battery state, payload issue, or airspace conflict forcing an abort

Field note: Scenarios should be inconvenient, not theatrical. The goal is to test judgment that resembles an actual job.

Pick a cadence your team will actually maintain

A program that looks perfect on paper but never gets completed is worthless.

For most drone teams, a workable structure includes a mix of frequent short reviews and less frequent deeper evaluations. The exact cadence depends on mission complexity and crew size, but the pattern should be consistent enough that nobody wonders what happens next.

A useful internal cycle often includes:

- Regular knowledge updates: brief sessions tied to procedure or regulatory changes

- Periodic practical checks: focused flight drills rather than broad “training days”

- Scenario sessions: tabletop or field-based exercises for unusual events

- Annual program review: not to re-teach everything, but to inspect weak points and update standards
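The cycle above can be expressed as data so the next due date for each item is never a guess. A minimal Python sketch, assuming hypothetical intervals in days (90, 180, 180, 365) that you would replace with your own policy; none of these numbers come from a regulation.

```python
from datetime import date, timedelta

# Hypothetical internal cadence in days. These intervals are assumptions for
# illustration, not regulatory requirements; set them from your own risk review.
CADENCE_DAYS = {
    "knowledge_update": 90,
    "practical_check": 180,
    "scenario_session": 180,
    "annual_program_review": 365,
}


def next_due(last_completed: dict[str, date], today: date) -> dict[str, date]:
    """Next due date per cadence item; an item with no completion on record is due today."""
    due = {}
    for item, interval in CADENCE_DAYS.items():
        done = last_completed.get(item)
        due[item] = done + timedelta(days=interval) if done else today
    return due


def outstanding(last_completed: dict[str, date], today: date) -> list[str]:
    """Cadence items that are due today or already past due, in alphabetical order."""
    return sorted(item for item, d in next_due(last_completed, today).items() if d <= today)


history = {
    "knowledge_update": date(2025, 1, 15),
    "practical_check": date(2025, 3, 1),
}
print(outstanding(history, today=date(2025, 6, 1)))
# prints ['annual_program_review', 'knowledge_update', 'scenario_session']
```

Treating a never-completed item as due immediately is a deliberate choice: a new pilot or a newly added drill shows up on the list instead of silently waiting a full interval.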

Score performance in plain language

You don’t need an elaborate grading philosophy to start. You do need consistency.

Use a simple rubric such as:

| Competency area | Standard |
|---|---|
| Pre-mission planning | Complete, accurate, and aligned with site conditions |
| Aircraft handling | Controlled, smooth, and within defined tolerances |
| Emergency response | Prompt, correct, and calm |
| Crew coordination | Clear communication and role discipline |
| Judgment | Stops, adapts, or continues for sound operational reasons |

Avoid pass-fail thinking too early. A pilot may be operationally safe while still needing focused improvement in one area.
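One way to record the rubric without collapsing it into pass-fail is to score each competency separately and pull out the areas that need focused work. A minimal Python sketch; the three-level scale and the competency keys are assumptions mirroring the table above, not a published grading scheme.

```python
from enum import IntEnum


class Rating(IntEnum):
    """Hypothetical three-level scale; adjust the levels to your own rubric."""
    NEEDS_WORK = 1
    MEETS_STANDARD = 2
    STRONG = 3


# Competency keys mirror the rubric table; the identifiers are illustrative.
COMPETENCIES = (
    "pre_mission_planning",
    "aircraft_handling",
    "emergency_response",
    "crew_coordination",
    "judgment",
)


def focus_areas(scores: dict[str, Rating]) -> list[str]:
    """Competencies rated NEEDS_WORK, in rubric order.

    Raises ValueError if any competency was left unscored, so an incomplete
    check cannot be filed as if it were complete.
    """
    missing = set(COMPETENCIES) - set(scores)
    if missing:
        raise ValueError(f"unscored competencies: {sorted(missing)}")
    return [c for c in COMPETENCIES if scores[c] == Rating.NEEDS_WORK]


check = {c: Rating.MEETS_STANDARD for c in COMPETENCIES}
check["emergency_response"] = Rating.NEEDS_WORK
print(focus_areas(check))  # prints ['emergency_response']
```

A pilot with one NEEDS_WORK rating is not “failed”; the output is a short list that drives the next practical session.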

Write it into your operating system

Training falls apart when it lives only in someone’s memory. Put it into the same controlled documentation that governs the rest of the operation. If your manuals are overdue for structure, this template for an operations manual is a good starting point for turning informal practice into a documented standard.

That’s the difference between a company that trains and a company that hopes its people stay sharp.

Measuring the ROI of Continuous Training

Owners and operations leads eventually ask the same question. Is this worth the time and cost?

That’s a fair question, especially in small drone businesses where the same person may be pilot, scheduler, maintenance lead, sales contact, and invoice chaser. The challenge is that the drone sector still lacks hard numbers on training return. There is little data on the specific ROI of recurrent training for drone operations, even though pilot error is a leading cause of accidents. Quantifying benefits such as insurance premium reductions or accident prevention savings remains an important but underexplored part of the case, as noted in this discussion of recurrent training ROI gaps.

That means you need to build the business case from your own operation.

Where the return usually shows up first

The first gains are often operational, not financial on a spreadsheet.

You’ll usually notice recurrent training paying back through:

- Fewer preventable mistakes: missed checklist items, rushed launches, poor site setups

- Cleaner client delivery: fewer mission interruptions and fewer avoidable re-flights

- Better decision discipline: pilots become more willing to stop a mission for the right reason

- Stronger credibility: clients and partners trust teams that can explain their standards clearly

These benefits are real even when you can’t assign a neat number to them.

Use internal KPIs instead of waiting for industry data

If the market doesn’t give you ROI benchmarks, create your own. Track indicators that training should influence over time.

A simple internal scorecard can include:

| KPI | What it tells you |
|---|---|
| Near-miss reports | Whether crews are identifying and reducing risky patterns |
| Mission disruptions | How often preventable operational errors break workflow |
| Retraining triggers | Which competencies repeatedly need correction |
| Client quality issues | Whether execution problems affect deliverables |
| Pilot self-assessment | Where confidence and hesitation are changing |

Don’t use these metrics to punish pilots. Use them to identify where the system is weak.
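To keep the scorecard about the system rather than individuals, you can log events by period and KPI only, with no pilot field, and compare periods. A minimal Python sketch, assuming hypothetical KPI keys and period labels such as "2025-Q1"; pilot self-assessment is left out because it is qualitative rather than a count.

```python
from collections import Counter

# Hypothetical KPI keys matching the scorecard table. Events deliberately carry
# no pilot name: the goal is to find weak spots in the system, not to rank people.
KPIS = ("near_miss_report", "mission_disruption", "retraining_trigger", "client_quality_issue")


def kpi_change(events: list[tuple[str, str]], before: str, after: str) -> dict[str, int]:
    """Change in event count per KPI between two periods (after minus before).

    Direction needs interpretation: a rise in near-miss reports can mean a
    healthier reporting culture rather than more risk.
    """
    counts = Counter(events)  # keys are (period, kpi) pairs; missing keys count as 0
    return {kpi: counts[(after, kpi)] - counts[(before, kpi)] for kpi in KPIS}


log = (
    [("2025-Q1", "mission_disruption")] * 3
    + [("2025-Q2", "mission_disruption")]
    + [("2025-Q2", "near_miss_report")] * 2
)
print(kpi_change(log, "2025-Q1", "2025-Q2"))
# prints {'near_miss_report': 2, 'mission_disruption': -2, 'retraining_trigger': 0, 'client_quality_issue': 0}
```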

The strongest ROI argument

The most persuasive case for recurrent training is usually not “this will save money immediately.” It’s “this will reduce preventable exposure while helping us win and keep better work.”

That matters in drone services because customers often can’t directly judge piloting skill. They judge signals of professionalism instead:

- the quality of your planning,

- the consistency of your crew,

- the confidence of your site brief,

- the clarity of your records,

- and how you respond when conditions change.

Training becomes visible long before an incident does. Clients notice the discipline even when they can't name it.

When you frame recurrent training for pilots as a quality control system, not just a safety item, the ROI discussion gets much easier.

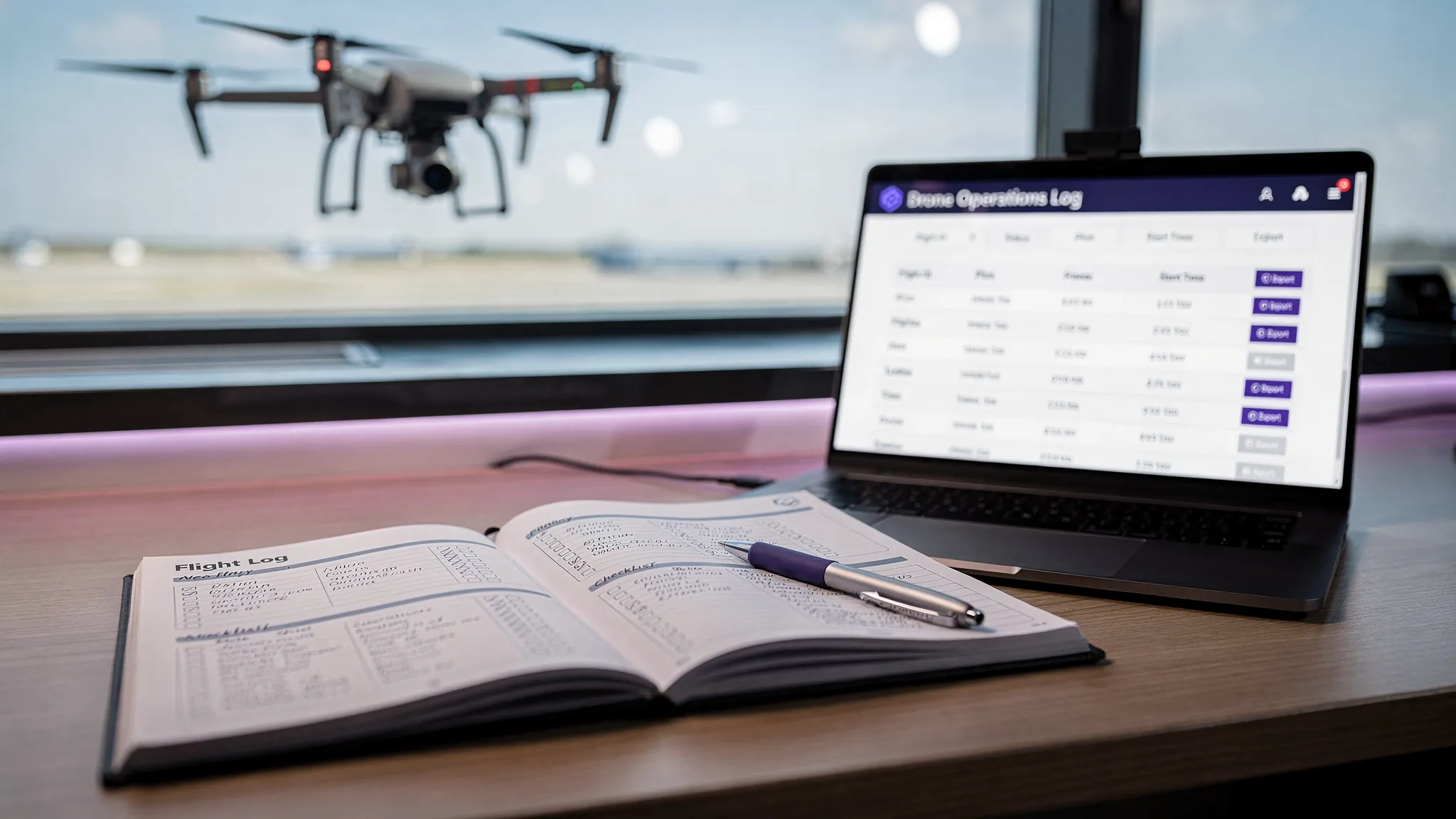

Streamlining Training with an Operations Platform

Training programs usually fail in administration before they fail in design.

The curriculum might be solid. The pilots may even buy into it. But then someone has to track expiries, record practical checks, attach evidence, keep aircraft-specific notes straight, and make sure the right training happened before the next contract starts. That’s where unmanaged systems drift.

What breaks when teams use scattered tools

A lot of operators start with a mix of spreadsheets, cloud folders, calendars, notes apps, and memory. That works for a while, especially in a small owner-led business. Then scale arrives.

The cracks usually look familiar:

- Training dates get missed: because they sit in one person’s calendar

- Records become incomplete: because practical checks happen in the field and never get written up properly

- Standards drift across crews: because each team lead tracks things slightly differently

- Audit prep becomes painful: because documents live in several systems with no single operational trail

The problem isn’t effort. It’s fragmentation.

What a dedicated platform should handle

For recurrent training to stay useful, it has to live close to daily operations. The system should connect people, aircraft, jobs, records, and compliance tasks instead of treating training as a separate admin project.

A capable operations platform should let you:

- Schedule training events against pilots, aircraft, or roles

- Store certificates and competency records in one place

- Link training outcomes to flight operations so performance trends are visible

- Trigger reminders before skills, qualifications, or internal checks lapse

- Export reports quickly when clients, insurers, or internal stakeholders ask questions
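The reminder item in that list comes down to a simple window check. A minimal Python sketch of the idea, assuming a hypothetical `Qualification` record and a 30-day lead time; it illustrates the logic and is not any specific platform’s API.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Qualification:
    """Hypothetical record: one pilot, one tracked item, one expiry date."""
    pilot: str
    item: str
    expires: date


def reminders(
    quals: list[Qualification], today: date, lead_days: int = 30
) -> list[tuple[str, str, int]]:
    """(pilot, item, days_left) for items expiring within lead_days, soonest first.

    A negative days_left means the item has already lapsed.
    """
    window_end = today + timedelta(days=lead_days)
    due = [(q.pilot, q.item, (q.expires - today).days) for q in quals if q.expires <= window_end]
    return sorted(due, key=lambda row: row[2])


roster = [
    Qualification("Avery", "practical_check", date(2025, 6, 10)),
    Qualification("Blake", "knowledge_update", date(2025, 5, 25)),
    Qualification("Casey", "scenario_session", date(2025, 9, 1)),
]
for pilot, item, days_left in reminders(roster, today=date(2025, 6, 1)):
    print(pilot, item, days_left)
# prints:
# Blake knowledge_update -7
# Avery practical_check 9
```

Keeping lapsed items in the same list, sorted first, means an overdue check cannot hide behind upcoming ones.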

At this point, software stops being a convenience and starts becoming part of your safety system.

Why this matters more as the team grows

Solo operators can get away with informal memory longer than they should. Teams can’t.

The moment you manage several pilots, different aircraft, site-specific workflows, and customer requirements, you need a system that reduces ambiguity. The point isn’t to create bureaucracy. The point is to stop wasting operational attention on avoidable admin.

If you’re comparing options for centralizing scheduling, records, fleet detail, and compliance oversight, this guide to the best drone operations platform is a practical place to assess what a purpose-built system should do.

Good operations software doesn't replace training discipline. It makes that discipline repeatable.

That's the ultimate payoff. The program stops depending on who remembers what. It starts functioning as part of the company.

From Required Chore to Competitive Edge

The drone industry is still young enough that many operators can get by on skill, hustle, and light documentation. That won’t hold forever.

The businesses that last usually build standards before the market forces them to. Recurrent training for pilots is one of those standards. It closes the gap between legal minimums and real-world readiness. It reduces the odds that a routine mission turns into an avoidable problem. It gives clients and partners a clearer reason to trust your operation.

It also changes how your team thinks. Pilots stop treating proficiency as something they earned in the past and start treating it as something they maintain on purpose. That mindset shows up in planning, execution, debriefing, and decision-making.

For drone operators, the lack of a single mandated recurrent framework isn't a reason to wait. It's a reason to lead. Borrow the discipline that manned aviation has already proven, adapt it to your aircraft and missions, and document it well enough that your operation can scale without losing control.

The distinguishing factor isn’t whether you can fly. Plenty of people can fly.

The differentiator is whether your operation can stay sharp, consistent, and defensible over time.

Frequently Asked Questions About Recurrent Training

Most operators don’t struggle with the idea of training. They struggle with implementation. These are the questions that come up once you try to make recurrent training part of normal operations instead of an occasional reset.

The practical questions operators ask

Some concerns are about time. Others are about cost, records, or whether a small team even needs a formal approach. The short answer is that the smaller the team, the more important a simple system becomes, because there’s less redundancy when one pilot makes a mistake.

A small team doesn't need a smaller standard. It needs a simpler one that actually gets used.

FAQ

| Question | Answer |
|---|---|
| Do solo drone operators really need a recurrent training program? | Yes. Solo operators often have the most to gain because there’s no second pilot, training manager, or chief pilot catching drift in technique or judgment. Keep it lean. Use a documented cycle that includes knowledge refreshers, practical skill checks, and scenario review tied to the jobs you actually fly. |
| How formal does the documentation need to be? | Formal enough that another competent person could review it and understand what was trained, who completed it, when it happened, and what follow-up was required. It doesn’t need to look like airline paperwork. It does need to be consistent. |
| Should training be different for media, inspection, and survey teams? | Yes. The structure can stay the same, but the content should reflect the real mission profile. Media crews may need more work on dynamic site changes and close coordination. Inspection crews may need stronger emphasis on obstacle environments, client safety boundaries, and repeatable pre-mission setup. Survey teams often need tighter discipline around consistency and procedural accuracy. |
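The documentation answer above translates directly into a minimum record shape. A minimal Python sketch, assuming a hypothetical `TrainingRecord` with one field for each thing a reviewer needs (who, what, when, outcome, follow-up); blank fields are flagged rather than silently accepted.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class TrainingRecord:
    """Hypothetical minimum record: who, what, when, outcome, and follow-up."""
    pilot: str
    topic: str
    completed_on: date
    outcome: str
    follow_up: str  # write "none required" explicitly rather than leaving it blank

    def missing_fields(self) -> list[str]:
        """Text fields a reviewer would need but that are empty or whitespace."""
        text_fields = ("pilot", "topic", "outcome", "follow_up")
        return [name for name in text_fields if not getattr(self, name).strip()]


record = TrainingRecord(
    pilot="J. Rivera",
    topic="Loss-of-GPS manual recovery drill",
    completed_on=date(2025, 5, 2),
    outcome="Met standard",
    follow_up="",
)
print(record.missing_fields())  # prints ['follow_up']
```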

Three implementation points that solve most problems

Keep the program small enough to survive busy months

A common failure is overdesign. Operators build a training framework that looks excellent in a document and collapses under real workload.

Start with a short recurring cycle and a handful of core competencies. Add complexity only after the team is consistently completing the basics.

Separate checking from coaching

Pilots need both evaluation and development, but not always at the same moment. If every training event feels like a test, people hide weaknesses. If every event is only coaching, standards get fuzzy.

Use some sessions to assess against a defined standard. Use others to practice and correct without pressure.

Debrief the system, not just the pilot

If several pilots make the same mistake, the issue may not be pilot quality. It may be checklist design, weak briefing habits, poor aircraft setup procedures, or unclear role definitions.

That’s one reason recurrent training is so valuable. It exposes operational design flaws you won’t see by looking only at incident reports.
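A simple way to spot system-level problems is to count how many different pilots hit the same debrief finding. A minimal Python sketch, assuming debrief findings are logged as hypothetical (pilot, issue) pairs and that two or more pilots sharing an issue warrants a look at the checklist or procedure; that threshold is an assumption.

```python
from collections import defaultdict


def systemic_issues(findings: list[tuple[str, str]], min_pilots: int = 2) -> list[tuple[str, int]]:
    """Issues reported for at least min_pilots distinct pilots, most widespread first.

    findings: (pilot, issue) pairs collected from debriefs. Ties are broken by
    issue name so the output order is stable.
    """
    pilots_by_issue: defaultdict[str, set[str]] = defaultdict(set)
    for pilot, issue in findings:
        pilots_by_issue[issue].add(pilot)
    flagged = [(issue, len(pilots)) for issue, pilots in pilots_by_issue.items() if len(pilots) >= min_pilots]
    return sorted(flagged, key=lambda row: (-row[1], row[0]))


debriefs = [
    ("A", "compass check skipped"),
    ("B", "compass check skipped"),
    ("C", "compass check skipped"),
    ("A", "late return-to-home call"),
    ("A", "late return-to-home call"),
    ("B", "unclear VO handover"),
    ("C", "unclear VO handover"),
]
print(systemic_issues(debriefs))
# prints [('compass check skipped', 3), ('unclear VO handover', 2)]
```

Counting distinct pilots rather than raw occurrences is the design choice that matters: one pilot repeating a mistake points to coaching, while the same mistake across several pilots points back at the checklist, briefing, or setup procedure.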

What a strong starting point looks like

If you need a simple model, build around these questions:

- What skills are most likely to fade if we don’t practice them?

- What errors would hurt us most if they happened on a client job?

- Which mission types create the most operational pressure?

- How will we record completion and follow-up?

Those four questions will get you further than downloading a generic training pack and hoping it fits.

For most professional teams, the goal isn’t to create a large academy-style program. It’s to create a durable operating habit. When that habit exists, standards become easier to maintain, new pilots come online more cleanly, and customers see a more mature business.

Dronedesk helps drone operators turn recurrent training from a scattered admin task into a controlled part of daily operations. If you want one place to manage pilots, aircraft, documents, flight records, and compliance workflows, take a look at Dronedesk. It’s built for professional drone teams that need safer, more efficient operations without adding unnecessary overhead.

What Makes a Great Drone Platform for Commercial Teams →

What Makes a Great Drone Platform for Commercial Teams → Your Guide to the Ultra Light Aircraft Licence in 2026 →

Your Guide to the Ultra Light Aircraft Licence in 2026 → UAVs for Sale: A Professional Buyer's Guide for 2026 →

UAVs for Sale: A Professional Buyer's Guide for 2026 → UAS Fleet Management Tips for Safer Multi-Pilot Operations →

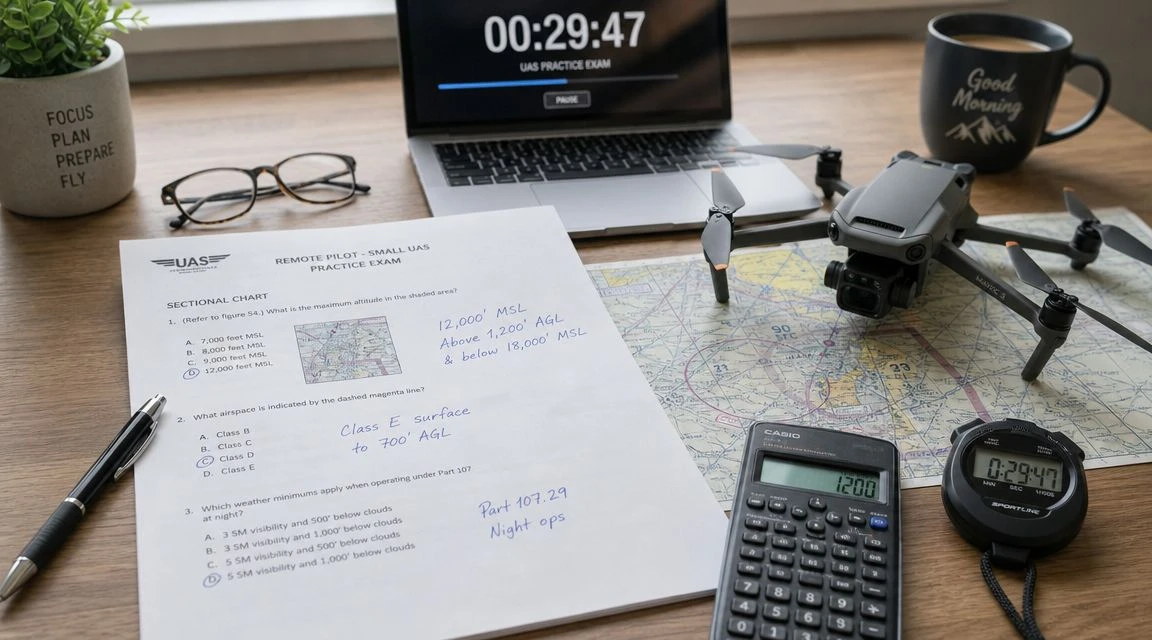

UAS Fleet Management Tips for Safer Multi-Pilot Operations → UAS Practice Exam: Your 2026 Part 107 Study Plan →

UAS Practice Exam: Your 2026 Part 107 Study Plan → How Survey Firms Use Drone Survey Software to Scale →

How Survey Firms Use Drone Survey Software to Scale → Drone Logbook vs Flight Log: What Operators Should Keep →

Drone Logbook vs Flight Log: What Operators Should Keep → Mastering Digital Aeronautical Flight Information File →

Mastering Digital Aeronautical Flight Information File → How to Set Up a Drone Pilot Logbook That Stands Up to Audits →

How to Set Up a Drone Pilot Logbook That Stands Up to Audits → The History of UAVs: From WWI Prototypes to Modern Drones →

The History of UAVs: From WWI Prototypes to Modern Drones →