8 Infrastructure Monitoring Best Practices for 2025

In the world of professional drone operations, success is measured not just by flight hours logged or stunning aerial data captured, but by the seamless reliability of the digital backbone supporting every mission. From flight planning platforms and data processing servers to client management portals and real-time data streams, the underlying IT infrastructure is the unsung hero. A single point of failure, such as a crashed server during a photogrammetry upload or a lagging database, can ground an entire fleet, delay critical projects, and erode hard-won client trust. This is where mastering modern infrastructure monitoring becomes a non-negotiable competitive advantage.

Moving beyond simple 'up/down' server checks is critical. A robust monitoring strategy provides deep, actionable insights that prevent downtime before it ever impacts your operations. It allows you to identify performance bottlenecks in your data processing pipeline, anticipate capacity needs as your fleet grows, and ensure the applications your team relies on are always responsive. Implementing a mature set of infrastructure monitoring best practices transforms your IT from a potential liability into a strategic asset that enables scalability and reliability.

This guide moves straight to the point, outlining eight essential best practices tailored for the unique demands of drone operations. You will learn how to implement comprehensive multi-layer monitoring, configure intelligent alerts that matter, and leverage automation to maintain system health. Each point is designed to provide the stability and performance required to manage complex drone workflows effectively, ensuring your technology never becomes a bottleneck to your growth. We will cover specific, actionable techniques to build a resilient and high-performing infrastructure that underpins every successful flight.

1. Comprehensive Multi-Layer Monitoring

Effective infrastructure monitoring isn't about watching a single component; it's about achieving a holistic, 360-degree view of your entire operational stack. This is the core principle behind comprehensive multi-layer monitoring, a foundational best practice for any serious drone operations platform. It involves implementing surveillance across every critical layer, from the physical hardware up to the end-user's interaction with your flight planning software.

This approach moves beyond simple server health checks. Instead, it creates a correlated understanding of performance, connecting CPU utilization on a cloud server, for instance, to the processing speed of photogrammetry data in your application, and ultimately, to the load times a pilot experiences in the field. By monitoring hardware (servers, storage), the operating system, network traffic, application performance (APM), and user experience, you can pinpoint the root cause of issues with precision, rather than chasing symptoms across siloed systems.

Why It's a Top Best Practice

In drone operations, a failure at any layer can have significant consequences, from a failed data upload to a critical flight plan not syncing. A multi-layer strategy ensures complete visibility. For example, if drone pilots report slow video processing, this approach allows you to see if the issue stems from an overloaded database (application layer), saturated network bandwidth (network layer), or insufficient server memory (hardware layer). This level of insight is crucial for maintaining reliability and performance.

Key Insight: Comprehensive multi-layer monitoring transforms your approach from reactive problem-solving to proactive performance optimization, ensuring every part of your drone data pipeline is healthy and efficient.

How to Implement Multi-Layer Monitoring

Adopting this strategy requires a structured approach. Start by identifying your most critical services, such as flight log ingestion or mission planning, and build out your monitoring from there.

- Standardize Metrics: Use consistent naming conventions (e.g., `app.flightplan.upload.latency`) across all layers to simplify correlation and analysis.

- Visualize Dependencies: Implement service maps to create a visual representation of how different components, like your user authentication service and your drone telemetry database, interact.

- Establish Ownership: Assign clear responsibility for each layer to specific teams or individuals. The cloud infrastructure team owns hardware monitoring, while the software development team owns application performance monitoring.
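The naming convention is easier to keep consistent when names are built and checked by code rather than typed by hand. The sketch below assumes a four-part scheme (`layer.service.operation.measurement`) inferred from the `app.flightplan.upload.latency` example; the layer list and the `metric_name` helper are illustrative, not part of any particular monitoring tool.

```python
import re

# Assumed convention: <layer>.<service>.<operation>.<measurement>,
# lowercase and dot-separated, e.g. app.flightplan.upload.latency
METRIC_NAME = re.compile(r"^[a-z][a-z0-9_]*(\.[a-z][a-z0-9_]*){3}$")

# Hypothetical layer prefixes: hardware, OS, network, application, user experience.
ALLOWED_LAYERS = {"hw", "os", "net", "app", "ux"}


def metric_name(layer: str, service: str, operation: str, measurement: str) -> str:
    """Build a metric name and reject anything that breaks the convention."""
    if layer not in ALLOWED_LAYERS:
        raise ValueError(f"unknown layer {layer!r}; expected one of {sorted(ALLOWED_LAYERS)}")
    name = ".".join([layer, service, operation, measurement])
    if not METRIC_NAME.match(name):
        raise ValueError(f"metric name {name!r} does not match the convention")
    return name


print(metric_name("app", "flightplan", "upload", "latency"))
print(metric_name("net", "gateway", "egress", "bytes_per_sec"))
```

A check like this could run in CI so that a misnamed metric is caught before it reaches a dashboard.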

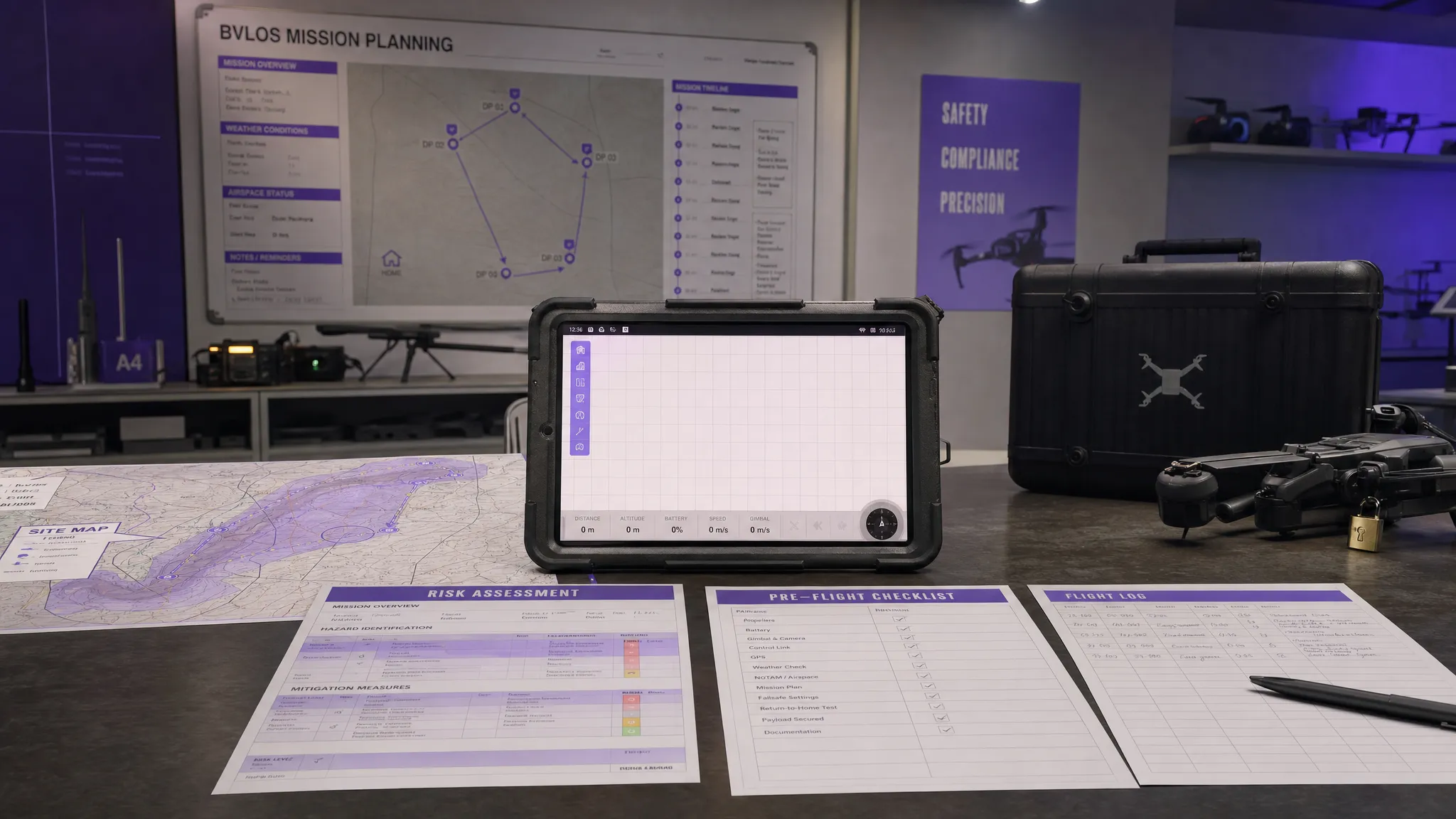

This infographic illustrates the interconnected nature of multi-layer monitoring, showing how data from hardware, applications, and user experience combine to form a complete picture of system health.

The visualization highlights that a truly comprehensive view is only achieved when data points from distinct infrastructure layers are analyzed together, revealing dependencies that would otherwise be invisible.

2. Proactive Alerting with Intelligent Thresholds

Effective infrastructure monitoring isn't just about collecting data; it’s about acting on it before minor issues escalate into major outages. Proactive alerting with intelligent thresholds moves beyond simple, static alerts (e.g., "CPU is over 90%") to a more sophisticated, context-aware system. It uses dynamic baselines, anomaly detection, and predictive analytics to notify teams of potential problems before they impact drone pilots or data processing pipelines.

This approach learns what "normal" looks like for your system during different times, such as peak flight hours versus overnight data processing. For instance, instead of a fixed alert for high database queries, an intelligent system would recognize that a spike is normal during a mass data upload from a fleet but anomalous at 3 AM. By understanding context, it dramatically reduces alert fatigue from false positives and ensures that when an alert does fire, it warrants immediate attention.

Why It's a Top Best Practice

In drone operations, performance degradation can be just as critical as a full outage. A lagging mission sync or a slow photogrammetry job can delay critical projects and frustrate users. Proactive alerting helps catch the subtle signs of impending failure. For example, a system might detect a gradual increase in mission data processing latency over several days. This could indicate an impending storage bottleneck, allowing your team to provision more resources long before pilots experience critical failures when uploading their flight logs. This is a core component of modern infrastructure monitoring best practices, shifting the focus from reaction to prevention.

Key Insight: Intelligent alerting transforms your monitoring from a noisy, reactive alarm system into a strategic, proactive early-warning system that respects your engineers' time and focus.

How to Implement Proactive Alerting

Implementing this requires a shift from static values to dynamic analysis. Start by analyzing historical data to understand your system's natural rhythms.

- Establish Dynamic Baselines: Use monitoring tools to analyze performance metrics over extended periods (e.g., weeks or months) to establish what normal operational patterns look like for different times and days.

- Use Composite Alerts: Combine multiple related metrics into a single, smarter alert. For example, trigger an alert only if high CPU usage, increased memory consumption, and rising API latency occur simultaneously.

- Implement Smart Alert Routing: Configure alerts to be sent directly to the responsible team. Database-related warnings should go to the data team, while API gateway issues should route to the application developers.

- Document Alert Runbooks: For every critical alert, create a simple, step-by-step guide (a runbook) that outlines the initial diagnostic and resolution steps, ensuring a consistent and rapid response.
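To make the dynamic baseline and composite alert ideas concrete, here is a minimal sketch. It assumes a simple z-score test against samples from comparable time windows; production tools typically use richer models, and the metric names, sample values, and the limit of 3 standard deviations are all illustrative.

```python
from statistics import mean, stdev


def is_anomalous(history: list[float], current: float, z_limit: float = 3.0) -> bool:
    """Flag `current` if it sits more than `z_limit` standard deviations
    above the mean of `history` (samples from comparable time windows)."""
    if len(history) < 2:
        return False  # not enough data to form a baseline
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return current > mu
    return (current - mu) / sigma > z_limit


def composite_alert(history: dict[str, list[float]], current: dict[str, float]) -> bool:
    """Fire only when every tracked metric is anomalous at the same time."""
    return bool(current) and all(
        is_anomalous(history[name], value) for name, value in current.items()
    )


# Hypothetical samples taken at the same hour on previous days.
history = {
    "cpu_percent": [41, 45, 39, 44, 42, 40],
    "memory_percent": [55, 57, 54, 58, 56, 55],
    "api_latency_ms": [120, 130, 118, 125, 122, 127],
}

# All three metrics are far outside their usual range: the alert fires.
print(composite_alert(history, {"cpu_percent": 93, "memory_percent": 88, "api_latency_ms": 410}))
# Only CPU is elevated: the composite alert stays quiet.
print(composite_alert(history, {"cpu_percent": 93, "memory_percent": 56, "api_latency_ms": 124}))
```

Requiring all signals to agree trades some sensitivity for fewer false positives; a looser rule, such as any two of three, is an equally valid design choice.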

3. Real-Time Dashboards and Visualization

Data without context is just noise. Real-time dashboards and visualization transform raw monitoring metrics into actionable intelligence, providing an immediate, intuitive view of your entire drone operations infrastructure. This practice involves consolidating key performance indicators (KPIs) from multiple sources into a single, cohesive visual interface, using charts, graphs, and status indicators to communicate system health.

This approach translates complex data streams, such as drone telemetry ingestion rates or mission planning API response times, into easily digestible formats. Instead of parsing thousands of log lines, an operations manager can glance at a dashboard and instantly understand the status of critical services. Effective visualization, as popularized by tools like Grafana and Tableau, allows teams to spot trends, anomalies, and correlations that would be impossible to detect in raw data alone.

Why It's a Top Best Practice

In the fast-paced world of drone operations, mean time to resolution (MTTR) is critical. A real-time dashboard is your first line of defense, enabling instant recognition of issues. When pilots in the field report that flight plans are failing to sync, a well-designed dashboard can immediately show a spike in database errors or a drop in network throughput, guiding the engineering team directly to the source of the problem. This rapid diagnostic capability is a core component of modern infrastructure monitoring best practices, reducing downtime and protecting operational integrity.

Key Insight: Effective dashboards democratize data, enabling everyone from engineers to non-technical stakeholders to understand system performance at a glance and make faster, more informed decisions.

How to Implement Real-Time Dashboards

Building useful dashboards is both an art and a science. The goal is to present the most critical information clearly, without overwhelming the user. Follow these principles for maximum impact:

- Follow the 5-Second Rule: A user should be able to understand the overall health of the system within five seconds of looking at the dashboard. Prioritize high-level status indicators (e.g., green/yellow/red) on the main view.

- Create Role-Specific Views: Your platform reliability team needs a different view than your flight operations manager. Create tailored dashboards that display the most relevant metrics for each role, such as server CPU for engineers and flight log processing latency for ops.

- Implement Progressive Disclosure: Design dashboards to provide an overview first, with the ability to drill down into more granular data on demand. A top-level chart might show overall API error rates, which can be clicked to reveal error rates for specific endpoints.

- Visualize SLOs: Display your Service Level Objective (SLO) targets directly alongside the current performance metrics. This provides immediate context, showing not just how the system is performing, but how it is performing against its promises.
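The green/yellow/red indicator and the SLO comparison can be driven by the same small calculation. The sketch below assumes a request-based SLO and an error-budget view; the `warn_at` cutoff of 75% of the budget is an arbitrary policy chosen for illustration, and `slo_status` is a hypothetical helper rather than a function of any dashboard product.

```python
def slo_status(good: int, total: int, slo_target: float, warn_at: float = 0.75) -> dict:
    """Compare a measured success ratio against an SLO target.

    `warn_at` is the share of the error budget that may be consumed
    before the panel turns yellow (an assumed policy, not a standard).
    """
    if not 0 < slo_target < 1:
        raise ValueError("slo_target must be between 0 and 1, e.g. 0.99")
    if total <= 0:
        return {"sli": None, "budget_used": 0.0, "status": "unknown"}

    sli = good / total
    allowed_failures = (1 - slo_target) * total
    budget_used = (total - good) / allowed_failures

    if budget_used >= 1.0:
        status = "red"      # SLO is being missed
    elif budget_used >= warn_at:
        status = "yellow"   # still within SLO, but budget is running low
    else:
        status = "green"
    return {"sli": sli, "budget_used": budget_used, "status": status}


# Hypothetical request counts for a mission-sync API with a 99% target.
for good in (9950, 9920, 9850):
    print(good, slo_status(good, 10_000, slo_target=0.99))
```

Showing `budget_used` next to the raw success ratio gives the "performance against promises" context described above.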

4. Automated Response and Self-Healing Systems

Advanced infrastructure monitoring goes beyond simply alerting humans to problems; it actively resolves them. This is the principle behind automated response and self-healing systems, which use monitoring data to trigger predefined corrective actions without manual intervention. This practice transforms monitoring from a passive reporting tool into an active participant in maintaining system stability.

This approach involves creating rules that, when a specific metric threshold is breached or an error pattern is detected, automatically execute a script or command. For a drone platform, this could mean restarting a failed video transcoding service, scaling up cloud resources to handle a sudden influx of flight log uploads, or automatically failing over to a backup database. Systems like Kubernetes are designed with this concept at their core, automatically replacing failed pods to ensure application availability.

Why It's a Top Best Practice

In drone operations, where data ingestion and processing are often time-sensitive, minimizing downtime is critical. A self-healing system can resolve a critical service outage in seconds, whereas manual intervention could take minutes or hours, especially if an issue occurs overnight. This rapid, automated remediation directly improves reliability and ensures that pilots in the field can consistently access mission plans and upload data without interruption. It’s a key step in building a truly resilient infrastructure.

Key Insight: Automated response turns your monitoring system into a first responder, reducing mean time to recovery (MTTR) and freeing up engineering teams to focus on innovation rather than firefighting.

How to Implement Automated Response

Implementing self-healing systems requires careful planning to ensure they operate safely and effectively. The goal is to start small and build confidence in your automation.

- Start with Simple Fixes: Begin by automating well-understood, low-risk actions. For example, create an automation rule that clears a temporary cache directory when free disk space on a data processing server falls below 10%.

- Log Everything: Maintain a detailed, immutable log of every action taken by the automated system. This is crucial for auditing, debugging, and understanding how the system behaves under different conditions.

- Include Manual Overrides: Ensure that for every automated action, there is a clear and accessible "kill switch" for a human operator to use. This prevents runaway processes from causing cascading failures.

- Test in Staging: Before deploying a new automation rule to production, rigorously test its trigger conditions and remediation script in a staging environment that mirrors your live infrastructure.

By gradually introducing these automated workflows, you can significantly enhance your platform's resilience. As AI and automation continue to reshape drone operations, this practice is a cornerstone of modern operational efficiency.
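As an illustration of the "simple fix" pattern with logging and a manual override, the following sketch clears a cache directory when free disk space drops below a threshold. The kill-switch file, the `clear_cache_if_low` helper, and the injectable `free_fn` are assumptions made for this example; shipping the log lines to an append-only store, which a real audit trail would need, is left out.

```python
import logging
import shutil
import tempfile
from pathlib import Path

logging.basicConfig(level=logging.INFO, format="%(asctime)s %(levelname)s %(message)s")
log = logging.getLogger("self_heal")


def free_fraction(path: Path) -> float:
    """Share of the filesystem holding `path` that is currently free."""
    usage = shutil.disk_usage(path)
    return usage.free / usage.total


def clear_cache_if_low(cache_dir: Path, kill_switch: Path,
                       min_free: float = 0.10, free_fn=free_fraction) -> int:
    """Delete files directly inside cache_dir when free space is below min_free.

    Does nothing if the kill-switch file exists. Returns the number of files removed.
    """
    if kill_switch.exists():
        log.warning("kill switch %s present; skipping remediation", kill_switch)
        return 0
    free = free_fn(cache_dir)
    if free >= min_free:
        log.info("free space %.1f%% is at or above threshold; nothing to do", free * 100)
        return 0
    removed = 0
    for item in cache_dir.iterdir():
        if item.is_file():
            item.unlink()
            removed += 1
            log.info("removed %s", item)
    log.info("remediation complete: %d files removed", removed)
    return removed


if __name__ == "__main__":
    # Safe demo in a throwaway directory; low disk space is simulated via free_fn.
    with tempfile.TemporaryDirectory() as tmp:
        cache = Path(tmp) / "cache"
        cache.mkdir()
        for i in range(3):
            (cache / f"tile_{i}.tmp").write_text("scratch data")
        print(clear_cache_if_low(cache, Path(tmp) / "DISABLE_AUTOMATION",
                                 free_fn=lambda _p: 0.05))
```

Passing the disk check in as `free_fn` is what makes the rule testable in staging without actually filling a disk.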

5. Centralized Log Management and Analysis

Effective infrastructure monitoring goes beyond metrics and dashboards; it requires a deep dive into the events occurring across your system. Centralized log management is the practice of aggregating log data from every component of your drone operations platform, from application servers to network devices, into a single, searchable system. This creates a unified source of truth for all system activities.

This approach transforms raw, distributed log files into a powerful, structured dataset. Instead of connecting to individual servers to tail log files during an incident, your team can query a centralized platform to correlate events across the entire stack. For instance, you can trace a failed flight plan upload from the initial user click in the web application, through the API gateway, down to a specific database error, all from one interface.

Why It's a Top Best Practice

In a complex drone ecosystem, issues are rarely isolated. A failed drone data sync could be caused by an application error, a network timeout, or a database constraint. Centralized logging allows you to see the complete story. With all logs in one place, you can reconstruct the sequence of events leading to a failure, perform security audits by searching for anomalous access patterns, and generate compliance reports with ease. This is one of the most critical infrastructure monitoring best practices for rapid troubleshooting and deep system understanding.

Key Insight: Centralized logging converts chaotic, distributed event data into an organized, searchable intelligence asset, dramatically reducing the time it takes to diagnose and resolve issues.

How to Implement Centralized Log Management

Implementing a centralized logging system requires a deliberate strategy to collect, process, and analyze data effectively. A great starting point is to focus on logs from your most critical services, like user authentication or flight data processing.

- Implement Structured Logging: Standardize your log format across all applications using a format like JSON. This makes logs machine-readable and easy to parse, filter, and analyze.

- Use Log Levels Appropriately: Enforce consistent use of log levels (e.g., `INFO`, `WARN`, `ERROR`). This allows you to quickly filter out noise and focus on critical errors during an investigation.

- Establish Retention Policies: Define clear log rotation and retention policies based on operational needs and compliance requirements. For example, retain debug logs for 7 days but audit logs for one year.

- Create Standardized Dashboards: Build pre-configured dashboards in your logging tool (like the ELK Stack or Splunk) to visualize key events, such as login failures, application error rates, and system warnings.
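Structured logging with consistent levels can be sketched using only Python's standard `logging` module. The field names (`timestamp`, `level`, `logger`, `message`, `context`) and the `flight_id` values below are illustrative choices, not a required schema; a log shipper would then forward each JSON line to the central platform.

```python
import json
import logging
from datetime import datetime, timezone


class JsonFormatter(logging.Formatter):
    """Render each log record as one JSON object per line."""

    def format(self, record: logging.LogRecord) -> str:
        payload = {
            "timestamp": datetime.fromtimestamp(record.created, tz=timezone.utc).isoformat(),
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        # Anything passed as extra={"context": {...}} becomes an attribute on the record.
        context = getattr(record, "context", None)
        if context:
            payload["context"] = context
        return json.dumps(payload)


def build_logger(name: str) -> logging.Logger:
    """Return a logger that writes JSON lines to stderr."""
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    if not logger.handlers:  # avoid duplicate handlers on repeated calls
        handler = logging.StreamHandler()
        handler.setFormatter(JsonFormatter())
        logger.addHandler(handler)
    logger.propagate = False
    return logger


log = build_logger("flightdata.ingest")
log.info("upload accepted", extra={"context": {"flight_id": "F-1001", "size_mb": 512}})
log.error("database write failed", extra={"context": {"flight_id": "F-1001", "retryable": True}})
```

Because every line is valid JSON with a `level` field, filtering to errors for one `flight_id` becomes a simple query instead of a regular-expression hunt.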

6. Performance Baseline Establishment and Trending

Effective infrastructure monitoring isn't just about spotting failures; it's about understanding what "normal" looks like for your platform. This is the principle behind establishing performance baselines, a critical practice that involves capturing and analyzing historical data to define the typical behavior of your systems. By knowing your normal, you can instantly recognize the abnormal.

This approach moves beyond setting static, arbitrary thresholds for alerts. Instead, it creates a dynamic understanding of performance, connecting, for example, the typical Monday morning surge in flight plan uploads to expected CPU and database load. By monitoring key metrics like API response times, data ingestion rates, and user session durations over weeks or months, you can distinguish between a genuine performance degradation and a predictable peak caused by a scheduled marketing campaign.

Why It's a Top Best Practice

In drone operations, performance directly impacts mission success. A slow interface can delay a critical survey, and a lagging data pipeline can frustrate clients waiting for deliverables. Establishing baselines allows you to detect subtle, slow-burning issues before they escalate. For instance, if the average processing time for a 100-image photogrammetry set slowly increases from 15 minutes to 25 minutes over three months, a baseline will flag this trend as an anomaly, prompting an investigation into potential database or storage bottlenecks.

Key Insight: Performance baselining transforms monitoring from a system of loud, often-ignored alarms into an intelligent early warning system, enabling you to address performance degradation before it impacts your pilots and clients.

How to Implement Performance Baselines

Adopting this strategy requires a data-driven, systematic approach. Begin by identifying key performance indicators (KPIs) for your most critical workflows, like mission synchronization or flight data analysis. The insights gained are invaluable and closely related to the principles of effective flight data monitoring.

- Collect Sufficient Data: Gather at least 30 days, and ideally up to 90 days, of performance metrics before setting your initial baselines to ensure they accurately reflect business cycles and user behavior.

- Account for Seasonality: Your baselines should accommodate predictable variations, such as higher activity during peak flying seasons or lower usage on weekends. Use models that understand daily, weekly, and seasonal patterns.

- Segment Your Baselines: Create separate baselines for different components (e.g., API gateway vs. video transcoding service) and user tiers (e.g., enterprise clients vs. individual pilots), as their "normal" performance profiles will differ significantly.

- Review and Evolve: Baselines are not static. Regularly review and adjust them after major software updates, infrastructure changes, or shifts in user growth to ensure they remain relevant and accurate.
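One simple way to account for daily and weekly patterns is to keep a separate baseline for each hour of the week. The sketch below uses a median per (weekday, hour) bucket, which is an assumption chosen for brevity; the synthetic data imitates the Monday-morning upload surge mentioned earlier, and `build_baseline` and `deviation` are hypothetical helper names.

```python
from collections import defaultdict
from datetime import datetime, timedelta
from statistics import median


def build_baseline(samples):
    """Group (timestamp, value) samples by (weekday, hour) and keep each bucket's median."""
    buckets = defaultdict(list)
    for ts, value in samples:
        buckets[(ts.weekday(), ts.hour)].append(value)
    return {key: median(values) for key, values in buckets.items()}


def deviation(baseline, ts, value):
    """Ratio of `value` to the expected value for that hour of the week.

    Returns None when there is no usable baseline for the bucket.
    """
    expected = baseline.get((ts.weekday(), ts.hour))
    if not expected:
        return None
    return value / expected


# Synthetic history: four weeks of hourly upload counts starting on a Monday,
# with a surge every Monday at 09:00 and a flat rate otherwise.
start = datetime(2025, 1, 6)
samples = []
for h in range(24 * 28):
    ts = start + timedelta(hours=h)
    surge = ts.weekday() == 0 and ts.hour == 9
    samples.append((ts, 900.0 if surge else 100.0))

baseline = build_baseline(samples)

# The same reading is ordinary on Monday morning but far above normal at 03:00 on Tuesday.
print(deviation(baseline, datetime(2025, 2, 3, 9), 945.0))
print(deviation(baseline, datetime(2025, 2, 4, 3), 945.0))
```

Segmenting by component or user tier, as suggested above, would amount to adding another field to the bucket key.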

7. Distributed Tracing and Application Performance Monitoring

In modern drone platforms built on microservices, a single user action, like requesting a 3D model render, can trigger a cascade of requests across dozens of individual services. Distributed tracing provides a way to follow these requests as they travel through your system, creating a complete, end-to-end narrative of each transaction. Paired with Application Performance Monitoring (APM), it moves beyond isolated metrics to offer deep insights into how your entire application ecosystem is performing.

This practice allows you to visualize the entire lifecycle of a request, from the moment a drone pilot clicks "upload flight data" to when the data is processed, stored, and confirmed. By tracing these paths, you can identify which specific service is causing a delay or an error, understand inter-service dependencies, and pinpoint performance bottlenecks that would otherwise be hidden within the complexity of a distributed architecture. It’s like having a GPS for your data, tracking its journey and reporting on every stop along the way.

Why It's a Top Best Practice

For complex drone operations platforms, understanding system behavior is very difficult without tracing. When a user reports that generating an aerial survey report is slow, distributed tracing can show you that the bottleneck isn't the user-facing web server, but an overworked image processing microservice three steps down the chain. This granular visibility is essential for debugging, optimizing performance, and ensuring the reliability of critical workflows, making it a cornerstone of modern infrastructure monitoring best practices.

Key Insight: Distributed tracing and APM turn your complex, distributed system from a black box into a transparent, observable network, enabling precise root cause analysis and proactive performance tuning.

How to Implement Distributed Tracing and APM

Integrating tracing requires a strategic approach that starts early and focuses on standardization. Begin by identifying critical user journeys, such as mission planning or data processing, to trace first.

- Standardize Instrumentation: Adopt a single standard like OpenTelemetry across all your services to ensure traces can be correlated consistently, regardless of the programming language or framework.

- Implement Smart Sampling: Tracing every single request can be resource-intensive. Implement sampling strategies (e.g., tracing 10% of requests or all requests that result in an error) to gather valuable insights without creating excessive performance overhead.

- Enrich Traces with Context: Add business-relevant metadata to your traces, such as `drone_id`, `pilot_id`, or `mission_type`. This context makes it much easier to filter and debug issues affecting specific users or workflows.

- Integrate with Logs and Metrics: Link your trace IDs to your logs and metrics. This allows you to jump from a high-level latency spike on a dashboard directly to the specific trace and corresponding logs that reveal the root cause.
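The sampling rule and the context enrichment described above can be sketched without any tracing library. This is a plain-Python illustration of the decision logic, not OpenTelemetry's API: `keep_trace` and `enrich` are hypothetical helpers, and keeping every errored request implies the decision is made after the request finishes (tail-based sampling).

```python
import hashlib


def keep_trace(trace_id: str, had_error: bool, sample_rate: float = 0.10) -> bool:
    """Keep every errored trace, plus a deterministic `sample_rate` share of the rest.

    Hashing the trace ID means every service reaches the same decision
    for the same trace without coordinating.
    """
    if had_error:
        return True
    digest = hashlib.sha256(trace_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64  # roughly uniform in [0, 1)
    return bucket < sample_rate


def enrich(span: dict, **context) -> dict:
    """Return a copy of `span` with business context merged into its attributes."""
    attributes = {**span.get("attributes", {}), **context}
    return {**span, "attributes": attributes}


# Hypothetical span for a flight data upload.
span = {"trace_id": "4bf92f3577b34da6", "name": "POST /flight-data", "attributes": {}}
span = enrich(span, drone_id="D-042", pilot_id="P-17", mission_type="survey")

print(span["attributes"])
print(keep_trace(span["trace_id"], had_error=True))

kept = sum(keep_trace(f"trace-{i}", had_error=False) for i in range(10_000))
print(f"kept {kept} of 10000 successful traces")
```

With a real instrumentation standard, the same attributes would be set on the span object and the sampling policy configured in the SDK or collector rather than hand-written.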

8. Infrastructure as Code Integration with Monitoring

Manually configuring monitoring for every new server or service is inefficient, error-prone, and unsustainable in modern, dynamic environments. Integrating monitoring directly into your Infrastructure as Code (IaC) workflows treats your monitoring setup as a core part of your application's architecture, defining and deploying it alongside the infrastructure it is meant to observe. This practice ensures that no resource is deployed without the necessary visibility from day one.

This approach codifies every aspect of your monitoring strategy, from alert thresholds and notification channels to complex dashboards. Using tools like Terraform, AWS CloudFormation, or Ansible, you can create version-controlled, repeatable templates that automatically configure CloudWatch alarms, Datadog dashboards, or Prometheus targets as part of the initial deployment pipeline. This eliminates configuration drift and guarantees that every environment, from development to production, adheres to the same high standard of observability.

Why It's a Top Best Practice

In the context of drone operations, where infrastructure might scale rapidly to process large photogrammetry datasets, IaC integration is critical for maintaining consistent oversight. If a new processing cluster is spun up for a large mapping mission, this practice ensures it is automatically equipped with the right CPU alerts, memory usage dashboards, and failure notifications. This prevents "blind spots" in your infrastructure, which are a common source of undetected failures and performance degradation.

Key Insight: By treating monitoring as code, you embed reliability and observability directly into your deployment lifecycle, transforming monitoring from a manual, reactive task into an automated, proactive discipline.

How to Implement IaC-Driven Monitoring

Integrating monitoring into your IaC practices requires a strategic and modular approach. The goal is to make monitoring a seamless part of every developer's workflow.

- Create Reusable Modules: Develop standardized IaC modules for common monitoring patterns. For example, create a Terraform module that deploys a web server along with pre-configured health checks, latency alerts, and a corresponding dashboard widget.

- Incorporate into Code Reviews: Make monitoring configurations a mandatory part of pull request reviews. This ensures that new features or infrastructure changes include a plan for observability before they are merged into the main branch.

- Use Parameterized Templates: Design your templates to accept variables for different environments. This allows you to set more sensitive alert thresholds for production (e.g., 90% CPU usage) while having more lenient ones for staging (e.g., 95% CPU usage) without duplicating code.

This method aligns perfectly with the principles behind systematic UAV flight planning, where automation and templating are used to ensure consistency and reduce manual error across complex operations.
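The parameterized-template idea can be shown in miniature with a function that renders one alert definition per environment. In practice this would live in a Terraform module or CloudFormation template; the Python stand-in below uses a made-up alert schema and channel names, with the 90% and 95% CPU thresholds taken from the example above.

```python
import json

# Hypothetical per-environment parameters; only the thresholds come from the text.
ENVIRONMENTS = {
    "production": {"cpu_threshold_percent": 90, "notify": "oncall-pager"},
    "staging": {"cpu_threshold_percent": 95, "notify": "team-email"},
}


def render_cpu_alert(service: str, environment: str) -> dict:
    """Render a CPU alert definition for one service in one environment."""
    if environment not in ENVIRONMENTS:
        raise ValueError(f"unknown environment {environment!r}")
    params = ENVIRONMENTS[environment]
    return {
        "name": f"{environment}-{service}-high-cpu",
        "metric": "cpu_utilization_percent",
        "comparison": "greater_than",
        "threshold": params["cpu_threshold_percent"],
        "evaluation_minutes": 5,  # illustrative evaluation window
        "notify": params["notify"],
    }


# One template, two environments, no duplicated definitions.
for env in ("production", "staging"):
    print(json.dumps(render_cpu_alert("photogrammetry-worker", env), indent=2))
```

Keeping the environment differences in a single parameter table makes them easy to spot in a pull request review.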

Best Practices Comparison: 8 Key Infrastructure Monitoring Strategies

| Item | Implementation Complexity 🔄 | Resource Requirements ⚡ | Expected Outcomes 📊 | Ideal Use Cases 💡 | Key Advantages ⭐ |
|---|---|---|---|---|---|
| Comprehensive Multi-Layer Monitoring | High - integration across hardware, OS, apps, network | High - significant data storage and processing | Complete visibility; early issue detection; root cause analysis | Complex infrastructures requiring full-stack insights | Holistic monitoring; enhanced correlation; capacity planning |
| Proactive Alerting with Intelligent Thresholds | Moderate to High - requires tuning and ML models | Moderate - baseline data and ML resources | Reduced alert fatigue; earlier detection; actionable alerts | Environments needing predictive alerts and noise reduction | Context-aware alerts; automated escalation; better signal-to-noise |
| Real-Time Dashboards and Visualization | Moderate - dashboard design and data integration | Moderate - real-time data processing needed | Immediate visibility; faster response; improved communication | Teams needing instant visual insights and status updates | Intuitive visuals; role-specific views; drill-down capabilities |
| Automated Response and Self-Healing Systems | High - complex automation logic and testing | Moderate to High - triggers and remediation actions | Reduced MTTR; 24/7 reliability; lowered operational overhead | Systems where rapid, automated issue resolution is critical | Consistent, fast remediation; improved reliability; cost savings |
| Centralized Log Management and Analysis | Moderate to High - log collection and normalization | High - storage, processing, network bandwidth | Centralized troubleshooting; enhanced security; audit readiness | Environments needing unified log analysis and compliance | Cross-system correlation; structured data; real-time insights |
| Performance Baseline Establishment and Trending | Moderate - requires long-term data collection | Moderate - historical data storage and analysis | Accurate anomaly detection; capacity forecasting; performance trends | Systems benefiting from data-driven planning and anomaly detection | Data-driven decisions; trend analysis; SLA/SLO improvements |
| Distributed Tracing & Application Performance Monitoring | High - instrumentation across distributed systems | High - storage and processing of trace data | Detailed performance visibility; faster root cause analysis | Complex microservices and distributed architectures | End-to-end tracing; dependency mapping; improved UX |
| Infrastructure as Code Integration with Monitoring | Moderate - integration of IaC and monitoring tools | Moderate - deployment automation resources | Consistent, version-controlled monitoring setups | Continuous deployment environments requiring automated monitoring | Consistency; version control; faster provisioning |

Elevating Your Operations with Intelligent Monitoring

Mastering infrastructure monitoring is no longer a peripheral IT task; it is a core strategic competency that directly fuels operational excellence and business growth. Moving beyond a simple, reactive "is it on or off?" approach transforms your technology stack from a potential liability into a powerful, reliable asset. The journey we've outlined through these eight infrastructure monitoring best practices provides a comprehensive roadmap for achieving this transformation, particularly within the demanding world of drone operations management.

By embracing a multi-layered monitoring strategy, you gain a holistic view that connects the health of your cloud servers directly to the performance of your client-facing data delivery portals. This foundational visibility is then sharpened by proactive alerting with intelligent, dynamic thresholds, ensuring your team responds to genuine risks, not just noisy, irrelevant fluctuations. This intelligent approach prevents alert fatigue and keeps your focus on what truly matters: preempting issues before they impact a mission.

From Data Points to Strategic Insights

The true power of a mature monitoring practice lies in its ability to convert raw data into actionable intelligence. This is where real-time dashboards and centralized log management become indispensable. A well-designed dashboard doesn't just display metrics; it tells a story about your operational health, enabling even non-technical stakeholders to understand performance at a glance. Similarly, effective log analysis allows you to pinpoint the root cause of an issue that might otherwise take hours of guesswork, turning a potential crisis into a manageable, documented incident.

This transition from reactive to proactive is further accelerated by two key pillars:

- Performance Baselines: Establishing what "normal" looks like is a critical first step. Without a clear baseline, you cannot effectively identify deviations or predict future capacity needs. This practice is fundamental to scaling your drone operations confidently, knowing you have the resources to handle increased data processing or more concurrent flight missions.

- Automation and Self-Healing: The ultimate goal is to build a resilient system that can anticipate and correct failures on its own. Integrating monitoring with Infrastructure as Code (IaC) and implementing automated response scripts reduces manual intervention, minimizes human error, and drastically shortens recovery times. This frees your team to focus on innovation rather than firefighting.

Building a Resilient Foundation for Growth

Ultimately, implementing these infrastructure monitoring best practices is about building trust. It fosters trust within your team that the tools they rely on will be available and performant. It builds trust with your clients, who depend on you for timely, accurate data delivery and reliable service. For any drone service provider, from a solo operator to a large enterprise, a robustly monitored infrastructure is the bedrock upon which a successful, scalable business is built.

Adopting these strategies ensures you are not just preventing downtime; you are actively engineering a more efficient, secure, and predictable operational environment. You are creating a system where performance is not an afterthought but a measured, managed, and continuously optimized component of your service delivery. This strategic investment in visibility and control is what separates thriving, future-ready operations from those constantly hampered by technical debt and unexpected failures. The path to elevating your drone operations begins with intelligent, comprehensive monitoring.

Ready to streamline the rest of your drone operations with the same level of efficiency? While you perfect your technical infrastructure, let Dronedesk manage everything from flight planning and risk assessments to client and team management. Discover how our all-in-one platform can bring order and clarity to your complex workflows by visiting Dronedesk today.
