Fleet Management Dataset: A Guide for Drone Operations

If you're running more than a handful of drones, you probably already know the feeling. One aircraft is due for maintenance, another battery has been cycled hard for weeks, a pilot forgot to log a small incident, and the client wants a clean report that proves the job was profitable. The data exists somewhere, but it's scattered across flight apps, handwritten notes, CSV exports, finance records, and someone's memory.

That patchwork works for a while. Then the operation grows. Jobs overlap, more pilots touch the same aircraft, and simple questions start taking too long to answer. Which drone earns the most? Which battery is becoming unreliable? Which site types create the most follow-up maintenance? A proper fleet management dataset is how you stop guessing and start running drone operations like a business.

The Foundation of Modern Drone Operations

Most drone teams don't start with a dataset. They start with a few flights, a simple spreadsheet, and a rough habit of logging what matters. Then they add another aircraft, another pilot, another client requirement, and the cracks show quickly.

A flight log alone isn't enough. It tells you where and when a drone flew. It usually doesn't connect that mission to the battery used, the pilot assigned, the maintenance triggered afterward, the airspace constraints encountered, or the revenue tied to the work. A fleet management dataset is broader. It links assets, people, missions, compliance, maintenance, and costs into one operational record.

Why the shift matters

Ground fleet management has already shown where this is heading. The U.S. fleet management market was valued at $19.47 billion in 2020 and is projected to reach $52.5 billion by 2030, reflecting a compound annual growth rate of 10.6%, according to IntelliShift's fleet management statistics. For drone operators, that matters because the same operational pressure exists in the air. More assets. More telemetry. More accountability.

The difference is that most traditional fleet resources assume trucks, vans, and fixed routes. Drone teams deal with payload changes, battery rotation, weather sensitivity, pilot recency, firmware differences, and airspace restrictions. A survey company flying roof inspections doesn't manage risk the same way as a haulage business. If your work includes property inspections, "How Drone Property Surveys Are Revolutionising The UK Housing Market" is a useful example of how drone outputs are becoming part of mainstream professional workflows. Once that happens, clients expect the same professionalism in your records as they do in your imagery.

Practical rule: If you can't trace a mission from booking to aircraft to battery to pilot to report to invoice, you don't have an operational dataset. You have fragments.

What a real drone dataset changes

With a connected dataset, teams stop reacting late. They can spot patterns before they become expensive. Repeated hard landings can be tied to a specific aircraft. A battery with falling reliability can be retired before it causes a field failure. A client sector that looks profitable on paper can be exposed as margin-poor once travel, repeat visits, and maintenance are linked properly.

That changes how you scale:

- Safety improves because maintenance isn't based on memory.

- Compliance gets easier because pilot, aircraft, and mission records line up.

- Profitability becomes visible because costs attach to actual work.

- Client reporting gets stronger because evidence is already structured.

The difference between hobby logging and operations management

Hobbyists can tolerate missing fields. Commercial operators can't. If your team is bidding for repeat inspection work, utility contracts, mapping jobs, or recurring media shoots, the dataset becomes part of delivery quality. It helps you prove reliability internally and externally.

A mature drone operation doesn't ask, "Did we log the flight?" It asks, "Can we use this record to make the next decision better?" That's the primary purpose of a fleet management dataset.

Designing Your Core Fleet Management Dataset

The hardest part isn't collecting data. It's deciding what deserves a permanent place in the system. If the schema is too thin, you can't answer serious operational questions. If it's too bloated, pilots stop entering data properly and the whole thing becomes noisy.

For drone teams, I recommend building the dataset around six entities: Drones, Batteries, Equipment, Personnel, Missions, and Maintenance Logs. That structure is flexible enough for a solo operator and disciplined enough for a larger team. It also handles a common drone problem that trucking datasets often miss. Many small drone operators struggle with integrating legacy equipment, like older DJI models, into modern datasets, and there's a real gap around drone-specific logs such as flight hours and battery cycles, as noted in the SCITEPRESS paper on fleet data integration gaps.

Start with entities, not apps

Software changes. Your data model should last longer than the tool you're using today. Define the entities first, then map tools and imports into that structure.

Here is a practical baseline.

| Entity | Field Name | Data Type | Example | Description |
|---|---|---|---|---|
| Drone | drone_id | Text | DRN-001 | Unique internal ID for each aircraft |
| Drone | manufacturer | Text | DJI | Maker of the aircraft |
| Drone | model | Text | Mavic 3 Enterprise | Specific aircraft model |
| Drone | serial_number | Text | SN-AX45 | Manufacturer serial |
| Drone | purchase_date | Date | 2024-03-10 | Asset acquisition date |
| Drone | status | Text | active | Active, grounded, retired |
| Drone | firmware_version | Text | v01.02 | Current firmware state |
| Drone | total_flight_hours | Decimal | 126.4 | Lifetime aircraft hours |
| Battery | battery_id | Text | BAT-014 | Unique internal battery ID |
| Battery | linked_drone_model | Text | Mavic 3 Enterprise | Compatible aircraft model |
| Battery | purchase_date | Date | 2024-04-02 | Acquisition date |
| Battery | total_charge_cycles | Integer | 87 | Charge cycle count |
| Battery | last_health_check | Date | 2026-01-14 | Last inspection date |
| Battery | status | Text | in_service | In service, monitor, retire |
| Equipment | equipment_id | Text | EQ-009 | Accessory or payload ID |
| Equipment | type | Text | RTK module | Equipment class |
| Equipment | assigned_to | Text | DRN-001 | Linked drone or person |
| Personnel | person_id | Text | PILOT-03 | Unique personnel ID |
| Personnel | full_name | Text | John Doe | Standardized person name |
| Personnel | role | Text | Pilot | Pilot, observer, manager |
| Personnel | certification_status | Text | current | Current training or authorization status |
| Mission | mission_id | Text | MIS-2026-041 | Unique mission record |
| Mission | client_name | Text | North Ridge Surveys | Customer associated with mission |
| Mission | site_name | Text | West Substation | Mission location label |
| Mission | pilot_id | Text | PILOT-03 | Assigned pilot |
| Mission | drone_id | Text | DRN-001 | Aircraft used |
| Mission | battery_ids | Text | BAT-014;BAT-018 | Batteries used on mission |
| Mission | mission_type | Text | inspection | Survey, inspection, media, mapping |
| Mission | mission_date | Date | 2026-02-18 | Date flown |
| Mission | flight_duration_minutes | Decimal | 38.5 | Total duration |
| Mission | outcome | Text | completed | Completed, aborted, rescheduled |
| Maintenance Log | maintenance_id | Text | MNT-220 | Unique maintenance event |
| Maintenance Log | asset_type | Text | battery | Drone, battery, controller, payload |
| Maintenance Log | asset_id | Text | BAT-014 | Asset receiving maintenance |
| Maintenance Log | issue_reported | Text | voltage irregularity | Problem observed |
| Maintenance Log | action_taken | Text | removed from rotation | Action completed |
| Maintenance Log | performed_by | Text | TECH-01 | Responsible person |
| Maintenance Log | maintenance_date | Date | 2026-02-19 | Service date |
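If you want to see how those rows might look in code, here is a minimal Python sketch of two of the entities. The field names come from the table; the class names and the `parse_battery_ids` helper are assumptions made for illustration, not a prescribed implementation.

```python
from dataclasses import dataclass
from datetime import date


def parse_battery_ids(raw: str) -> list[str]:
    """Split the semicolon-separated battery_ids text field into a list."""
    return [part.strip() for part in raw.split(";") if part.strip()]


@dataclass
class Battery:
    battery_id: str
    linked_drone_model: str
    purchase_date: date
    total_charge_cycles: int
    last_health_check: date
    status: str  # in_service, monitor, retire


@dataclass
class Mission:
    mission_id: str
    client_name: str
    site_name: str
    pilot_id: str
    drone_id: str
    battery_ids: list[str]
    mission_type: str
    mission_date: date
    flight_duration_minutes: float
    outcome: str


# Example values taken from the table's Example column.
mission = Mission(
    mission_id="MIS-2026-041",
    client_name="North Ridge Surveys",
    site_name="West Substation",
    pilot_id="PILOT-03",
    drone_id="DRN-001",
    battery_ids=parse_battery_ids("BAT-014;BAT-018"),
    mission_type="inspection",
    mission_date=date.fromisoformat("2026-02-18"),
    flight_duration_minutes=38.5,
    outcome="completed",
)
```

Storing `battery_ids` as a list in code while keeping it as delimited text in a flat export is one possible compromise. A separate mission-to-battery link table would be the more relational option.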

What to track that most teams miss

Drone operations create component-level wear that generic vehicle schemas often ignore. That matters. A drone can be technically available while still carrying hidden risk in a specific part.

Include fields or linked records for:

- Propeller usage history so you can spot repeated replacements on one airframe.

- Gimbal service events because image quality issues often start there, not in the camera body.

- Controller assignment to show which pilot flew with which controller during an incident.

- Payload pairing so survey outputs can be traced to the exact sensor setup used.

- Firmware state at mission time because troubleshooting without version history is guesswork.

A clean dataset doesn't try to capture everything. It captures the few details that explain failure, cost, and performance.

JSON example for a mission record

A JSON structure works well when you're ingesting data from apps, APIs, or export jobs.

{
  "mission_id": "MIS-2026-041",
  "mission_date": "2026-02-18",
  "client_name": "North Ridge Surveys",
  "site_name": "West Substation",
  "mission_type": "inspection",
  "pilot": {
    "person_id": "PILOT-03",
    "full_name": "John Doe"
  },
  "drone": {
    "drone_id": "DRN-001",
    "model": "Mavic 3 Enterprise",
    "firmware_version": "v01.02"
  },
  "batteries_used": [
    "BAT-014",
    "BAT-018"
  ],
  "outcome": "completed",
  "notes": "Minor wind increase during final leg",
  "follow_up_maintenance_required": false
}

CSV example for a simple starting point

If you're still early in the process, CSV is fine. Keep the structure flat and consistent.

mission_id,mission_date,client_name,site_name,pilot_id,drone_id,battery_ids,mission_type,flight_duration_minutes,outcome
MIS-2026-041,2026-02-18,North Ridge Surveys,West Substation,PILOT-03,DRN-001,"BAT-014;BAT-018",inspection,38.5,completed
MIS-2026-042,2026-02-19,Oakline Media,City Centre Roof,PILOT-02,DRN-004,"BAT-021",media,21.0,completed

Design for change

Older aircraft, retrofitted processes, and mixed manufacturers make rigid schemas fail quickly. Use required fields for identifiers and dates, but keep room for optional drone-specific metadata. Free text is useful for notes, but not for core fields like pilot name, mission type, or maintenance status. Those need controlled values.
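One way to enforce that split between required fields and controlled values is a small validation pass over each imported row. The sketch below is only an illustration: the choice of required fields, the `validate_row` function, and the second sample row (`MIS-2026-043`) are assumptions, while the allowed values mirror the schema table.

```python
import csv
import io

# Assumed minimum set of required fields: identifiers and dates.
REQUIRED_FIELDS = ["mission_id", "mission_date", "pilot_id", "drone_id"]

# Controlled vocabularies taken from the schema table's descriptions.
CONTROLLED_VALUES = {
    "mission_type": {"survey", "inspection", "media", "mapping"},
    "outcome": {"completed", "aborted", "rescheduled"},
}


def validate_row(row: dict) -> list[str]:
    """Return a list of problems found in one mission row (empty if clean)."""
    problems = []
    for field in REQUIRED_FIELDS:
        if not (row.get(field) or "").strip():
            problems.append(f"missing required field: {field}")
    for field, allowed in CONTROLLED_VALUES.items():
        value = (row.get(field) or "").strip()
        if value and value not in allowed:
            problems.append(f"{field} has uncontrolled value: {value!r}")
    return problems


# The second row is a made-up bad record used to show the checks firing.
SAMPLE = """mission_id,mission_date,pilot_id,drone_id,mission_type,outcome
MIS-2026-041,2026-02-18,PILOT-03,DRN-001,inspection,completed
MIS-2026-043,2026-02-20,,DRN-004,roof survey,completed
"""

rows = list(csv.DictReader(io.StringIO(SAMPLE)))
report = {row["mission_id"]: validate_row(row) for row in rows}
```

Optional drone-specific metadata is deliberately not checked here, so older aircraft with sparse records still pass as long as the identifiers and dates are present.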

If you're evaluating structure at a broader operational level, this guide to a UAS fleet management system is a helpful companion because it frames the dataset as part of the operating model, not just a storage problem.

The goal isn't to build a perfect database on day one. It's to create a structure that still makes sense when you double the fleet and everyone is too busy to remember what happened last Tuesday.

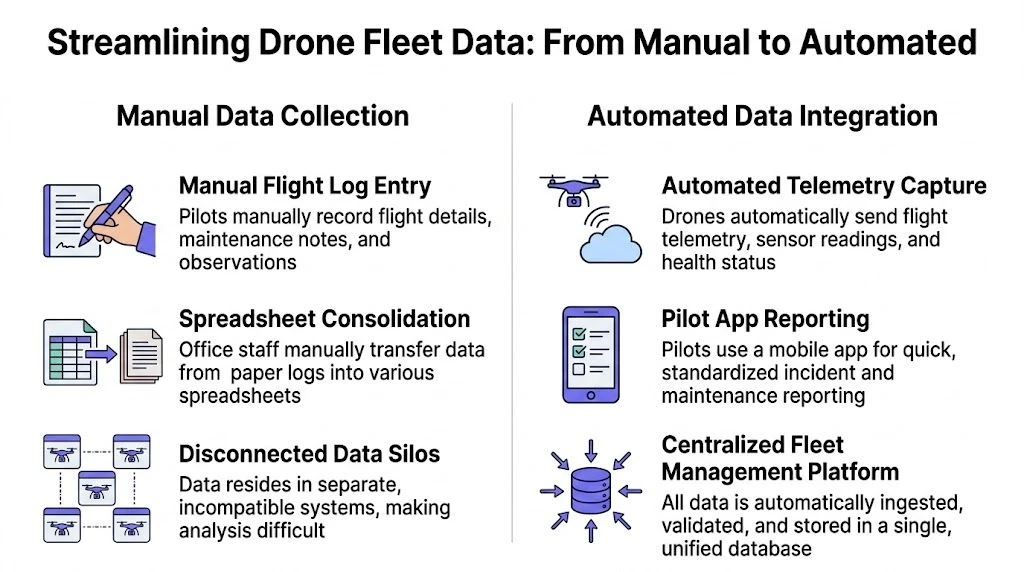

Streamlining Data Collection and Integration

Most bad datasets are created by reasonable people using bad collection methods. A pilot finishes a job and writes notes in one app. Maintenance goes into a shared spreadsheet. Battery issues get mentioned in chat. Finance tracks job profitability somewhere else. After a few months, the team has plenty of data and very little truth.

That problem isn't unique to drones, but drones feel it fast because so much of the operation depends on timing, equipment pairing, and location context. According to GoCodes fleet management statistics, data integration problems in broader fleet environments, such as siloed GPS telematics and maintenance logs, leave 94% of spreadsheet-based datasets incomplete or inconsistent. The same source reports that centralizing data with standardized KPIs can reduce downtime by 20-30% and raise analytics accuracy to 85%.

Manual collection versus integrated collection

The trade-off is simple. Manual systems feel cheap at the start and become expensive later. Integrated systems take more setup discipline and pay back in cleaner operations.

| Approach | What it looks like in practice | What works | What breaks |
|---|---|---|---|
| Manual logging | Pilots enter mission notes by hand after each job | Flexible for edge cases and unusual missions | Inconsistent naming, skipped fields, late entry |
| Spreadsheet consolidation | Admin staff merge logs, maintenance, and cost data weekly | Easy to start with familiar tools | Version conflicts, duplicate records, poor traceability |
| Automated sync | Flight logs and telemetry flow into a central platform | Better consistency and timeliness | Needs planning around field mapping and exceptions |
| Hybrid workflow | Automation for core records, manual forms for incidents and notes | Usually the most practical setup | Can drift if standards aren't enforced |

What automation should handle

Automation is best used for repetitive, machine-generated records. Don't waste pilot time typing data that already exists in telemetry or mission history.

Use automation for:

- Flight telemetry capture from the aircraft or connected ecosystem.

- Mission timestamps so takeoff and landing records stay consistent.

- Asset association when the system can identify which aircraft was flown.

- Standard maintenance triggers after defined events or thresholds.

- Export routines for finance or client reporting.

Keep manual entry for the things software can't infer cleanly:

- Field observations such as unusual wind, signal loss, or access constraints.

- Incident detail that needs human narrative.

- Commercial context such as client approvals, change requests, or reschedules.

Field lesson: The best workflow isn't fully automated. It's selective. Let systems collect what they know, and let people record what only they saw.
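A standard maintenance trigger can be as simple as a threshold check that runs after each sync. The sketch below assumes two hypothetical thresholds and a hypothetical `maintenance_triggers` function. The numbers are placeholders, not recommendations; real limits should come from manufacturer guidance and your own procedures.

```python
# Hypothetical thresholds for illustration only.
BATTERY_CYCLE_REVIEW_THRESHOLD = 150
DRONE_HOURS_BETWEEN_INSPECTIONS = 50.0


def maintenance_triggers(battery_cycles: dict, drone_hours_since_service: dict) -> list:
    """Return draft maintenance tasks for assets that crossed a threshold."""
    tasks = []
    for battery_id, cycles in sorted(battery_cycles.items()):
        if cycles >= BATTERY_CYCLE_REVIEW_THRESHOLD:
            tasks.append({
                "asset_type": "battery",
                "asset_id": battery_id,
                "issue_reported": f"{cycles} charge cycles reached",
            })
    for drone_id, hours in sorted(drone_hours_since_service.items()):
        if hours >= DRONE_HOURS_BETWEEN_INSPECTIONS:
            tasks.append({
                "asset_type": "drone",
                "asset_id": drone_id,
                "issue_reported": f"{hours} flight hours since last service",
            })
    return tasks


tasks = maintenance_triggers(
    battery_cycles={"BAT-014": 87, "BAT-021": 152},
    drone_hours_since_service={"DRN-001": 51.2, "DRN-004": 12.0},
)
```

The output reuses the `asset_type`, `asset_id`, and `issue_reported` field names from the maintenance log, so a generated task can become a draft log entry that a person confirms or dismisses.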

Set standards before you scale

The painful cleanup usually starts with labels. One pilot writes "Substation West", another writes "west sub", and a third writes the client site code. Those all refer to the same place, but your dashboard won't know that.

Set standards early for:

- Mission naming

- Pilot names and IDs

- Aircraft IDs

- Battery IDs

- Client and site names

- Outcome categories

- Maintenance status terms

This isn't glamorous work, but it prevents analysis from collapsing later.
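For site labels, a lookup table of known aliases is often enough to start with. This is a rough sketch under stated assumptions: the `SITE_ALIASES` mapping, the `WS-01` site code, and the `canonical_site` helper are all invented for the example.

```python
from typing import Optional

# Hypothetical alias table; keys are lowercased with collapsed whitespace.
SITE_ALIASES = {
    "west substation": "West Substation",
    "substation west": "West Substation",
    "west sub": "West Substation",
    "ws-01": "West Substation",  # made-up client site code
}


def canonical_site(raw: str) -> Optional[str]:
    """Map a free-text site label to its canonical name, or None if unknown."""
    key = " ".join(raw.lower().split())
    return SITE_ALIASES.get(key)


labels = ["Substation West", "west sub", "WS-01", "North yard"]
resolved = [canonical_site(label) for label in labels]
```

Returning `None` for unknown labels, rather than guessing, keeps new sites visible so someone can decide whether they are a new location or another spelling of an existing one.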

A good parallel exists outside aviation. Teams wrestling with invoices, coding, and reconciliations often hit the same bottleneck, which is why operational leaders looking at process discipline can learn from adjacent systems like automation in accounting. The pattern is familiar. Standard inputs create trustworthy outputs.

Privacy and access control

Drone data often contains more sensitive information than teams realize. Site coordinates can reveal client assets. Pilot records contain personal information. Imagery metadata may expose location and timing. If the dataset is shared loosely, you create compliance and contractual risk.

A practical access model keeps different views for different users:

- Pilots should see assigned missions, their own logs, and relevant equipment status.

- Operations managers need full fleet visibility.

- Maintenance staff need asset and issue histories.

- Finance staff usually need cost and job linkage, not all flight detail.

- Clients should receive only curated reporting outputs.
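In code, that access model can start as a per-role allow-list of fields. The sketch below is an assumption-heavy illustration: the role names, the field sets, the `mission_view` function, and the revenue figures are hypothetical, and a real system would also filter which records a user sees, not only which fields.

```python
# Hypothetical role-to-field mapping; None means the role sees every field.
ROLE_FIELDS = {
    "operations_manager": None,
    "pilot": {"mission_id", "mission_date", "site_name", "pilot_id", "drone_id", "battery_ids"},
    "maintenance": {"mission_id", "mission_date", "drone_id", "battery_ids", "outcome"},
    "finance": {"mission_id", "client_name", "revenue", "direct_cost"},
}


def mission_view(role: str, record: dict) -> dict:
    """Return only the fields of a mission record that a role may see."""
    if role not in ROLE_FIELDS:
        raise ValueError(f"unknown role: {role}")
    allowed = ROLE_FIELDS[role]
    if allowed is None:
        return dict(record)
    return {key: value for key, value in record.items() if key in allowed}


record = {
    "mission_id": "MIS-2026-041",
    "mission_date": "2026-02-18",
    "client_name": "North Ridge Surveys",
    "site_name": "West Substation",
    "pilot_id": "PILOT-03",
    "drone_id": "DRN-001",
    "battery_ids": ["BAT-014", "BAT-018"],
    "outcome": "completed",
    "revenue": 1200.0,     # hypothetical figure
    "direct_cost": 450.0,  # hypothetical figure
}

finance_view = mission_view("finance", record)
```

Clients are left out of the mapping on purpose. They receive curated reports rather than a filtered view of the live dataset.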

If you're reviewing workflow architecture in more depth, this article on data integration best practices is useful because it focuses on keeping systems connected without letting the data model become chaotic.

What works in the field

The collection pipeline that tends to hold up is straightforward. Automated import handles telemetry and flight records. Pilots complete a short standardized post-mission form. Maintenance events create linked records against the drone, battery, or payload involved. Cost data is attached at the mission or job level, not left in a separate finance silo.

What doesn't work is asking busy pilots to become data clerks. If an important field takes too long to enter, it won't be entered consistently. Design the process for the day you're busiest, not the day you're setting up the system.

From Raw Telemetry to Actionable Insights

Raw drone data is messy. That's normal. Flight records come in with different naming conventions, pilots abbreviate things differently, batteries get entered with missing IDs, and imported telemetry often contains edge cases that don't fit your neat schema. The teams that get value from a fleet management dataset aren't the ones with perfect data. They're the ones with disciplined cleaning rules.

Clean identifiers first

Start with fields that connect records together. If IDs are inconsistent, nothing downstream is reliable.

A practical order looks like this:

- Standardize pilot names. Convert "John D.", "Doe, John", and "john doe" into one canonical record tied to a single person ID.

- Normalize aircraft and battery IDs. If one battery appears as "bat14", "BAT-14", and "battery 14", merge them into one format.

- Standardize mission types. Reduce variations like "roof survey", "survey-roof", and "property inspection" into controlled categories that still serve the business.

- Align dates and times. Pick one time zone policy and stick to it.

A lot of operational reporting problems are really identity problems in disguise.
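The first two steps can be sketched in a few lines of Python. Everything named here is an assumption for illustration: the `PILOT_ALIASES` table, the helper functions, and the choice of a zero-padded `BAT-014` format (picked to match the schema examples).

```python
import re
from typing import Optional

# Hypothetical alias table keyed by a normalized form of the name.
PILOT_ALIASES = {
    "john doe": "PILOT-03",
    "john d": "PILOT-03",
}


def name_key(raw: str) -> str:
    """Lowercase a name, reorder 'Last, First', and drop punctuation."""
    raw = raw.strip()
    if "," in raw:
        last, first = [part.strip() for part in raw.split(",", 1)]
        raw = f"{first} {last}"
    cleaned = re.sub(r"[^a-z ]", "", raw.lower())
    return " ".join(cleaned.split())


def resolve_pilot(raw: str) -> Optional[str]:
    """Return the person ID for a name variant, or None if it is unknown."""
    return PILOT_ALIASES.get(name_key(raw))


def normalize_battery_id(raw: str) -> Optional[str]:
    """Convert variants like 'bat14' or 'battery 14' to the BAT-014 format."""
    match = re.fullmatch(r"\s*(?:bat|battery)[\s\-_]*(\d+)\s*", raw, flags=re.IGNORECASE)
    if not match:
        return None
    return f"BAT-{int(match.group(1)):03d}"


pilot_ids = [resolve_pilot(n) for n in ["John D.", "Doe, John", "john doe"]]
battery_ids = [normalize_battery_id(b) for b in ["bat14", "BAT-14", "battery 14"]]
```

Abbreviations like "John D." can't be resolved by rules alone, which is why the sketch leans on an explicit alias table and returns `None` for anything it doesn't recognise.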

Handle missing and conflicting values

Not every blank field should be filled automatically. Some missing values are safe to infer. Others should be flagged and reviewed.

Use simple rules:

- Infer only when the source is strong. If a mission record clearly ties to one aircraft and one pilot in imported telemetry, you can populate those fields confidently.

- Flag maintenance-critical blanks. Missing battery IDs, incomplete incident logs, or absent maintenance outcomes should go into a review queue.

- Preserve original values. Keep the raw imported field somewhere, even after you standardize it.

Clean data isn't data that looks tidy. It's data you can defend when something goes wrong.
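Those three rules translate fairly directly into code. The following is a minimal sketch, assuming a hypothetical `fill_or_flag` function and a telemetry import that lists the aircraft IDs it saw. Your own sources will dictate what counts as a strong enough signal to infer from.

```python
def fill_or_flag(record: dict, telemetry_drone_ids: list) -> dict:
    """Infer from strong sources, flag critical blanks, and keep raw values."""
    cleaned = dict(record)
    cleaned["raw"] = dict(record)  # preserve the original import untouched
    cleaned["inferred_fields"] = []
    cleaned["review_flags"] = []

    # Infer the aircraft only when telemetry points to exactly one drone.
    if not cleaned.get("drone_id"):
        if len(telemetry_drone_ids) == 1:
            cleaned["drone_id"] = telemetry_drone_ids[0]
            cleaned["inferred_fields"].append("drone_id")
        else:
            cleaned["review_flags"].append("drone_id missing and telemetry is ambiguous")

    # Never guess maintenance-critical fields; queue them for a person.
    if not cleaned.get("battery_ids"):
        cleaned["review_flags"].append("battery_ids missing")

    return cleaned


result = fill_or_flag(
    {"mission_id": "MIS-2026-041", "drone_id": "", "battery_ids": ""},
    telemetry_drone_ids=["DRN-001"],
)
```

Recording which fields were inferred makes it possible to tell a pilot-entered value from a system-filled one later.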

Standardize units and operational language

Drone datasets get messy when one pilot records altitude in feet, another works in meters, and a third enters free-text notes like "high". Controlled vocabularies matter more than teams expect.

Useful normalization checks include:

- Units of measure for altitude, speed, and duration

- Status values such as active, grounded, retired

- Mission outcomes such as completed, aborted, rescheduled

- Issue categories such as battery, propeller, gimbal, controller, weather, access

The aim isn't to erase field nuance. It's to make recurring patterns analyzable.
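As a small example of a unit check, the sketch below converts altitude entries to meters and refuses to guess at free text. The `altitude_to_meters` helper and the accepted unit spellings are assumptions for illustration. The conversion factor of 0.3048 meters per foot is exact.

```python
import re
from typing import Optional

FEET_TO_METERS = 0.3048  # exact definition of the international foot


def altitude_to_meters(value: str) -> Optional[float]:
    """Parse entries like '400 ft' or '120m'; return None for anything else."""
    match = re.fullmatch(
        r"\s*(\d+(?:\.\d+)?)\s*(m|meters|metres|ft|feet)\s*",
        value,
        flags=re.IGNORECASE,
    )
    if not match:
        return None  # free text such as "high" goes to review, not into the data
    number = float(match.group(1))
    if match.group(2).lower() in ("ft", "feet"):
        number *= FEET_TO_METERS
    return round(number, 1)


converted = [altitude_to_meters(v) for v in ["400 ft", "120m", "high"]]
```

Entries that come back as `None` are candidates for the review queue rather than silent deletion.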

Find outliers before they become decisions

Outliers can be real events or data errors. Treat them as both until proven otherwise.

Examples worth reviewing:

| Data point | Possible issue |
|---|---|
| Extremely short mission duration | Failed sync, aborted launch, or duplicate record |
| Battery linked to overlapping missions | Data entry error or incorrect asset assignment |
| Aircraft with no maintenance history | Missing records, not perfect reliability |
| Pilot with multiple name variants | Duplicate personnel records |
| Flight durations with mixed formatting | Manual entry inconsistency |

A simple rule helps. If a value would change a maintenance, compliance, or profitability decision, review it manually.
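The overlapping-battery case is a good candidate for an automated check, since one battery can't be in two aircraft at once. The sketch below assumes each mission carries `start` and `end` timestamps, which the baseline schema doesn't include, and uses made-up missions `MIS-2026-050` and `MIS-2026-051` to show the check.

```python
from datetime import datetime
from itertools import combinations


def overlapping_battery_use(missions: list) -> list:
    """Return (battery_id, mission_a, mission_b) for batteries used in overlapping windows."""
    conflicts = []
    for a, b in combinations(missions, 2):
        shared = set(a["battery_ids"]) & set(b["battery_ids"])
        windows_overlap = a["start"] < b["end"] and b["start"] < a["end"]
        if shared and windows_overlap:
            for battery_id in sorted(shared):
                conflicts.append((battery_id, a["mission_id"], b["mission_id"]))
    return conflicts


# Hypothetical start/end times; only the IDs echo earlier examples.
missions = [
    {"mission_id": "MIS-2026-041", "battery_ids": ["BAT-014", "BAT-018"],
     "start": datetime(2026, 2, 18, 9, 0), "end": datetime(2026, 2, 18, 9, 40)},
    {"mission_id": "MIS-2026-050", "battery_ids": ["BAT-014"],
     "start": datetime(2026, 2, 18, 9, 30), "end": datetime(2026, 2, 18, 10, 0)},
    {"mission_id": "MIS-2026-051", "battery_ids": ["BAT-018"],
     "start": datetime(2026, 2, 18, 11, 0), "end": datetime(2026, 2, 18, 11, 20)},
]

conflicts = overlapping_battery_use(missions)
```

The pairwise comparison is fine for a small fleet. For thousands of missions you would likely sort by battery and start time first.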

Build a repeatable cleaning workflow

A one-off cleanup is useful. A repeatable cleaning routine is what makes the dataset durable.

Use a recurring process:

- Ingest raw records from telemetry, forms, and admin systems.

- Validate required fields and ID formats.

- Normalize names, categories, and units.

- Flag exceptions for human review.

- Publish a cleaned dataset for reporting and dashboards.
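Strung together, those steps might look something like the sketch below. It is deliberately small and makes several assumptions: the `MIS-YYYY-NNN` ID pattern is inferred from the examples, the `MISSION_TYPE_MAP` is an invented mapping, and `run_cleaning` is a hypothetical name.

```python
import re

# ID format inferred from examples such as MIS-2026-041.
MISSION_ID_PATTERN = re.compile(r"^MIS-\d{4}-\d{3}$")

# Hypothetical mapping of free-text labels to controlled mission types.
MISSION_TYPE_MAP = {
    "roof survey": "survey",
    "survey-roof": "survey",
    "property inspection": "inspection",
}


def run_cleaning(raw_records: list) -> tuple:
    """Validate, normalize, and split records into published rows and a review queue."""
    published, review_queue = [], []
    for raw in raw_records:
        # Ingest: this sketch assumes every imported value is already a string.
        record = {key: str(value).strip() for key, value in raw.items()}

        # Validate required fields and ID formats.
        problems = []
        if not MISSION_ID_PATTERN.match(record.get("mission_id", "")):
            problems.append("mission_id format")
        if not record.get("drone_id"):
            problems.append("drone_id missing")

        # Normalize categories to the controlled vocabulary.
        mission_type = record.get("mission_type", "").lower()
        record["mission_type"] = MISSION_TYPE_MAP.get(mission_type, mission_type)

        # Flag exceptions for review; publish the rest with the raw import attached.
        if problems:
            review_queue.append({"raw": raw, "problems": problems})
        else:
            published.append({**record, "raw": raw})
    return published, review_queue


published, review_queue = run_cleaning([
    {"mission_id": "MIS-2026-041", "drone_id": "DRN-001", "mission_type": "Roof Survey"},
    {"mission_id": "mis 42", "drone_id": "", "mission_type": "media"},
])
```

Because the function takes raw records in and returns two lists, it can be rerun on every import without touching the source files.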

If you want a practical reference for interpreting operational records after cleanup, this guide to flight data analysis is worth keeping nearby.

The key is to separate raw data from reporting-ready data. Don't overwrite the original import just because you've standardized it. Keep the audit trail. In drone operations, that matters not only for analytics, but also for incident review, client queries, and internal accountability.

Unlocking Value with Analytics and Visualization

Once the dataset is clean, the interesting questions get easier. Not easy, but easier. You can stop asking "what happened?" and start asking "what keeps happening?" That's where a fleet management dataset starts earning its keep.

This is also where drone operations diverge from truck-focused analytics. In broader fleet settings, predictive models often assume road usage patterns. Drone operators face interruptions that generic telematics doesn't see. While trucking fleets use predictive analytics to reduce downtime, drone operators report 20-30% idle time due to airspace restrictions and other unique factors. A dedicated drone fleet dataset can identify those inefficiencies and improve utilization in ways generic telematics can't, as described in Intangles' digital twin discussion.

Three operations, three different questions

A survey team usually cares about repeatability and asset readiness. Their dashboard should surface which aircraft are most dependable for long technical jobs, which batteries are drifting toward retirement, and which sites generate the most maintenance follow-up.

A utility inspection team cares about interruptions. They need to know which jobs stall because of access windows, airspace limitations, or recurring equipment issues. Utilization alone can mislead them if the aircraft is available but the operating environment isn't.

A videography team often needs to understand margin at the project level. One aircraft may look busy but still be underperforming commercially if travel time, setup complexity, and redo work aren't linked back to the mission set.

Good analytics don't start with charts. They start with operational questions your team keeps asking out loud.

Example queries that matter

You don't need complicated models to get useful answers. Simple SQL-like logic is enough to build a strong first dashboard.
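If you don't have a database yet, Python's built-in `sqlite3` module is one low-effort way to experiment with queries of this kind. The sketch below loads a few mission rows into an in-memory database and runs a utilization query. The table layout is a cut-down assumption, and missions `MIS-2026-043` and `MIS-2026-044` are invented for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    """
    CREATE TABLE missions (
        mission_id TEXT PRIMARY KEY,
        drone_id TEXT,
        flight_duration_minutes REAL,
        outcome TEXT
    )
    """
)
conn.executemany(
    "INSERT INTO missions VALUES (?, ?, ?, ?)",
    [
        ("MIS-2026-041", "DRN-001", 38.5, "completed"),
        ("MIS-2026-042", "DRN-004", 21.0, "completed"),
        ("MIS-2026-043", "DRN-001", 12.0, "aborted"),    # hypothetical row
        ("MIS-2026-044", "DRN-001", 30.0, "completed"),  # hypothetical row
    ],
)

# Total completed flight minutes per aircraft, busiest first.
utilization = conn.execute(
    """
    SELECT drone_id, SUM(flight_duration_minutes) AS total_minutes
    FROM missions
    WHERE outcome = 'completed'
    GROUP BY drone_id
    ORDER BY total_minutes DESC
    """
).fetchall()
conn.close()
```

The aborted mission is excluded by the `WHERE` clause, so it doesn't inflate utilization for the aircraft involved.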

Find the most utilized drones

SELECT drone_id, SUM(flight_duration_minutes) AS total_minutes
FROM missions
WHERE outcome = 'completed'
GROUP BY drone_id
ORDER BY total_minutes DESC;

Find assets with recurring maintenance events

SELECT asset_id, COUNT(*) AS maintenance_events
FROM maintenance_logs
GROUP BY asset_id
ORDER BY maintenance_events DESC;

Spot batteries that may need review

SELECT battery_id, total_charge_cycles, status
FROM batteries
WHERE status IN ('monitor', 'retire');

Estimate mission profitability

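-- Assumes a mission_financials table with revenue and direct_cost per mission;
-- it is not part of the baseline schema above.
SELECT mission_id, client_name, revenue, direct_cost, (revenue - direct_cost) AS gross_margin
FROM mission_financials
ORDER BY gross_margin ASC;

Show pilot safety patterns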

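-- Assumes an incident_reports table keyed by pilot_id;
-- it is not part of the baseline schema above.
SELECT pilot_id, COUNT(*) AS incidents_logged
FROM incident_reports
GROUP BY pilot_id
ORDER BY incidents_logged DESC;

What to put on the dashboard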

The best drone dashboards are boring in a good way. They answer recurring management questions fast.

A useful layout usually includes:

- Fleet status panel showing active, grounded, and under-review assets

- Utilization trend by aircraft and by client type

- Maintenance watchlist for drones, batteries, and payloads

- Pilot activity panel with currency, recent missions, and incident flags

- Commercial view connecting missions to cost and revenue

- Operational friction map highlighting cancellations, airspace issues, and repeat site problems

Different roles should see different versions of the same truth. Pilots don't need full financial reporting. Finance doesn't need every telemetry detail. Operations leadership needs both summarized properly.

Visual patterns worth watching

Over time, a few patterns tend to reveal themselves:

- One drone does too much work because the team trusts it most. That creates concentration risk.

- Certain sites trigger repeat issues because of access, signal conditions, or environmental constraints.

- Specific payload combinations produce more follow-up maintenance.

- Some pilots generate cleaner operational records because their workflows are more disciplined, not just their flying.

Those patterns are useful because they support action. Rotate aircraft more intelligently. Adjust scheduling buffers. Retire weak batteries earlier. Standardize pre-flight checks based on recurring failure points.

Predictive thinking without overcomplicating it

You don't need a digital twin to act predictively. If the dataset shows that a battery has a growing pattern of reliability concerns, remove it before it becomes a field problem. If one model needs repeated gimbal attention after a certain style of mission, inspect it sooner. If one client category causes more aborted or delayed operations, price that risk properly.
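A rule-based watchlist is often enough to get started. The sketch below flags any asset with repeated maintenance events in a recent window. The 90-day window, the two-event threshold, and the `assets_needing_review` function are placeholder assumptions to tune against your own history, and some of the log dates are invented.

```python
from datetime import date, timedelta


def assets_needing_review(maintenance_logs, today, window_days=90, min_events=2):
    """Return asset IDs with at least min_events maintenance events in the recent window."""
    cutoff = today - timedelta(days=window_days)
    counts = {}
    for log in maintenance_logs:
        if log["maintenance_date"] >= cutoff:
            counts[log["asset_id"]] = counts.get(log["asset_id"], 0) + 1
    return sorted(asset for asset, n in counts.items() if n >= min_events)


# Illustrative log entries; dates are partly made up for the example.
logs = [
    {"asset_id": "BAT-014", "maintenance_date": date(2026, 1, 14)},
    {"asset_id": "BAT-014", "maintenance_date": date(2026, 2, 19)},
    {"asset_id": "BAT-018", "maintenance_date": date(2026, 2, 1)},
    {"asset_id": "DRN-001", "maintenance_date": date(2025, 6, 3)},
]

watchlist = assets_needing_review(logs, today=date(2026, 3, 1))
```

This is counting, not forecasting, but it surfaces the "growing pattern" early enough for a person to decide whether to pull the asset from rotation.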

The practical value of analytics is simple. It helps you place the right aircraft, with the right battery, under the right pilot, on the right job, with fewer surprises.

Building Your Data-Driven Drone Program

Professional drone operations don't become mature because they buy more aircraft. They become mature because they can explain, in records, how those aircraft are used, maintained, staffed, and monetized. That's what a fleet management dataset gives you.

The sequence is straightforward. Build a sensible schema. Collect data in a way that doesn't burden pilots unnecessarily. Clean it before anyone trusts a dashboard. Then use it to make operational decisions that reduce noise and improve consistency. None of that is glamorous, but it works.

What this changes in practice

A data-driven program is easier to run because fewer decisions depend on memory. It becomes easier to prepare for audits, answer client questions, track battery history, and justify equipment replacement. It also becomes easier to spot where the business is leaking time or margin.

That applies whether you're flying solo or managing a distributed team.

- Solo operators gain structure. Jobs stop living across notes apps, SD cards, and scattered exports.

- Small teams gain consistency. Everyone records work the same way.

- Enterprise teams gain control. Fleet-level visibility becomes realistic instead of aspirational.

The strongest argument for building a fleet management dataset isn't reporting. It's fewer operational surprises.

What not to do

A few mistakes come up repeatedly:

- Don't start with every field imaginable. Start with the fields tied to safety, maintenance, utilization, and commercial performance.

- Don't trust spreadsheets forever. They help you begin. They rarely help you scale.

- Don't let free text replace controlled fields. Notes are useful, but they aren't a reliable reporting model.

- Don't separate operations from finance. If mission records and costs never meet, profitability stays fuzzy.

A practical standard to aim for

A good drone operation should be able to answer these questions quickly:

- Which aircraft and batteries were used on a mission?

- Who flew it, and under what operational context?

- What maintenance followed?

- What did the mission cost?

- Was the work commercially worthwhile?

- What should change before the next job?

If those answers take hours to assemble, the data model still needs work.

The good news is that this isn't reserved for large teams with technical staff. A solo pilot can build these habits. A small survey company can standardize records. A larger program can unify teams that currently operate in silos. The dataset is the lever. Once it's in place, better reporting, better maintenance planning, and better asset utilization follow naturally.

If you want to spend less time stitching together spreadsheets and more time running flights, Dronedesk is built for exactly this kind of operational discipline. It brings planning, logging, fleet records, team management, reporting, and direct DJI syncing into one platform, so your drone data is usable from day one instead of becoming an admin project you never quite finish.
