Top Quality Assurance Best Practices for Drone Ops in 2025

In the rapidly advancing world of commercial drone operations, success is measured not just by flight hours, but by the reliability, safety, and compliance of every single mission. As enterprises increasingly rely on UAVs for critical tasks, from infrastructure inspection and agricultural surveying to emergency response and media production, the need for robust quality assurance has never been greater. A single oversight can lead to equipment loss, mission failure, or a serious safety incident, which makes a structured approach to quality essential.

This guide moves beyond generic advice to outline eight essential quality assurance best practices tailored to drone operations management, with a focus on the software that plans, tracks, and reports on missions. The strategies span the full lifecycle, from requirements and design through automated testing, release checkpoints, and test data handling, so that the tools behind pre-flight safety protocols, compliance workflows, and post-flight analysis can be trusted. Implementing these practices will help your organization mitigate risks, enhance operational efficiency, and build a solid foundation of trust with clients and regulators alike.

You will learn how to implement practical frameworks that ensure every flight is planned, executed, and reviewed with precision. Whether you are a solo operator aiming for professional excellence or a manager overseeing a large fleet, mastering these QA principles is the key to unlocking sustainable growth and operational superiority. This list provides the specific, actionable insights needed to establish a comprehensive quality management system that ensures safety and delivers consistent, high-quality results on every project.

1. Shift-Left Testing

Shift-left testing is a foundational quality assurance best practice that moves testing activities earlier in the software development lifecycle (SDLC) of drone operations management software. Instead of treating QA as a final gate before deployment, this approach "shifts" it to the left on the project timeline, embedding quality checks from the very beginning, starting with requirements and design. For drone operations, this means identifying potential issues in mission planning, data processing workflows, or compliance reporting before a single line of code is written, which greatly reduces the cost and complexity of fixes.

The core principle is proactive defect prevention over reactive defect detection. By involving QA professionals in initial planning, you can challenge assumptions and foresee operational edge cases. For instance, a QA analyst might question how a flight planning module handles sudden changes in restricted airspace data, a consideration developers might overlook until late-stage testing. This early and continuous feedback loop ensures that quality is a shared responsibility across the entire team, not just the QA department's burden.

Why It's a Top Practice

Adopting a shift-left strategy is crucial because the later a bug is found, the more expensive it is to fix. A flaw discovered in the requirements phase might take minutes to correct, while the same flaw found post-deployment could require extensive code rewrites, data migration, and emergency patches, potentially grounding an entire fleet of drones.

Key Insight: Shifting left transforms QA from a cost center focused on finding bugs into a value-driver focused on preventing them, directly improving reliability and operational uptime for drone missions.

Large software organizations such as Microsoft and IBM are frequently cited as examples of moving testing earlier to reduce escaped defects and defect density. For drone service providers, this translates to more reliable software for mission-critical tasks like infrastructure inspection and agricultural surveying.

How to Implement Shift-Left Testing

Integrating this approach requires a cultural and procedural shift. Here are actionable steps to get started:

- Involve QA in Planning: Invite QA team members to requirements gathering and design review meetings. Their unique perspective helps identify ambiguities and potential failure points in proposed drone flight plans or data management features.

- Invest in Developer Testing: Train developers on unit testing and integration testing frameworks. When developers write their own tests, they catch bugs immediately, leading to cleaner code handoffs.

- Automate Early: Implement static code analysis tools directly within the developer's Integrated Development Environment (IDE). These tools automatically flag coding errors, security vulnerabilities, and style inconsistencies as code is being written.

- Establish Clear Standards: Create and distribute comprehensive testing guidelines and quality checklists. This ensures everyone, from developers to product managers, understands the quality expectations for features related to flight logging, pilot credential management, and asset tracking.
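To make the "Invest in Developer Testing" step concrete, here is a minimal Python sketch of a requirement captured as unit tests while the feature is still being built, using the restricted-airspace question raised earlier in this section. The `RestrictedZone` type and `find_airspace_conflicts` function are hypothetical, and the sketch assumes a flat local coordinate frame in meters with circular zones; a real planner would use geodesic math and official airspace data.

```python
import math
import unittest
from dataclasses import dataclass


@dataclass(frozen=True)
class RestrictedZone:
    """Hypothetical circular no-fly zone in a local planar frame (meters)."""
    name: str
    center_x: float
    center_y: float
    radius_m: float


def find_airspace_conflicts(waypoints, zones):
    """Return the sorted names of zones that contain at least one waypoint.

    Simplification: only waypoints are checked, not the straight legs between
    them, so a leg that cuts through a zone would be missed.
    """
    conflicts = set()
    for x, y in waypoints:
        for zone in zones:
            if math.hypot(x - zone.center_x, y - zone.center_y) <= zone.radius_m:
                conflicts.add(zone.name)
    return sorted(conflicts)


class AirspaceConflictTests(unittest.TestCase):
    # Written with the requirement "every plan is re-checked against the
    # latest restricted-airspace data", before any UI for it exists.
    def test_clear_route_has_no_conflicts(self):
        zones = [RestrictedZone("stadium", 1000, 1000, 200)]
        self.assertEqual(find_airspace_conflicts([(0, 0), (100, 0)], zones), [])

    def test_newly_published_zone_is_detected(self):
        route = [(0, 0), (500, 500)]
        zones = [RestrictedZone("stadium", 1000, 1000, 200)]
        self.assertEqual(find_airspace_conflicts(route, zones), [])
        # Simulate an airspace update that adds a temporary restriction.
        zones.append(RestrictedZone("temp-restriction", 450, 450, 100))
        self.assertEqual(find_airspace_conflicts(route, zones), ["temp-restriction"])

    def test_waypoint_on_boundary_counts_as_conflict(self):
        zones = [RestrictedZone("helipad", 0, 0, 50)]
        self.assertEqual(find_airspace_conflicts([(50, 0)], zones), ["helipad"])


if __name__ == "__main__":
    # exit=False keeps the interpreter running after the tests finish.
    unittest.main(argv=["airspace-tests"], exit=False)
```

Running the file directly executes the three tests. Because only waypoints are checked, a follow-up test for legs that cross a zone between waypoints would be a natural next requirement to capture.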

2. Risk-Based Testing

Risk-based testing is a strategic quality assurance best practice that prioritizes testing efforts based on the potential impact and likelihood of failure in different areas of the drone operations management software. Instead of trying to test everything equally, this approach focuses resources on the most critical functionalities, such as flight control systems, battery life monitoring, and geofencing compliance, ensuring that the highest-risk components receive the most rigorous scrutiny.

This methodology involves a systematic process of identifying, analyzing, and prioritizing potential risks associated with the software. For a drone platform, a high-risk scenario could be a glitch in the "return-to-home" feature, while a low-risk issue might be a minor UI typo in the pilot logbook. By quantifying these risks, QA teams can allocate their limited time and budget to prevent catastrophic failures, rather than getting bogged down by trivial defects.

Why It's a Top Practice

Adopting a risk-based approach is vital because it provides a logical and defensible framework for making tough testing decisions. It's impossible to test every conceivable user path and data combination, especially in complex systems managing drone fleets. This practice ensures that your most significant vulnerabilities are addressed first, directly enhancing safety and operational reliability.

Key Insight: Risk-based testing optimizes QA effectiveness, ensuring that testing efforts are concentrated on features that pose the greatest threat to operational safety, data integrity, and business continuity if they were to fail.

Described by testing authorities such as Dorothy Graham and covered in the ISTQB certification syllabus, this method is used extensively in high-stakes industries. Banking applications use it to secure payment processing, and healthcare systems apply it to protect patient data. For drone operations, it means prioritizing the features that prevent flyaways, ensure regulatory compliance, and support mission success.

How to Implement Risk-Based Testing

Implementing this strategy requires collaboration between QA, developers, and business stakeholders to identify and agree upon key risks. Here are actionable steps to get started:

- Conduct Stakeholder Risk Workshops: Involve drone pilots, operations managers, and compliance officers in a formal risk identification session. Their frontline experience is invaluable for identifying what could go wrong in real-world scenarios, from GPS signal loss to incorrect payload data entry.

- Create a Risk Matrix: Develop a matrix that scores risks based on their likelihood (how often might it occur?) and impact (how severe are the consequences?). This visual tool helps prioritize testing for high-likelihood, high-impact features.

- Tailor Test Design to Risk Levels: Design more exhaustive and intensive tests for high-risk areas. For example, the automated flight plan execution module should undergo rigorous integration, performance, and failure-recovery testing, while a low-risk reporting feature might only require basic functional checks.

- Continuously Review and Adjust: Risks are not static. Regularly revisit your risk assessment, especially after software updates, incident reports, or changes in aviation regulations, to ensure your testing strategy remains aligned with the current operational landscape.

3. Test Automation Pyramid

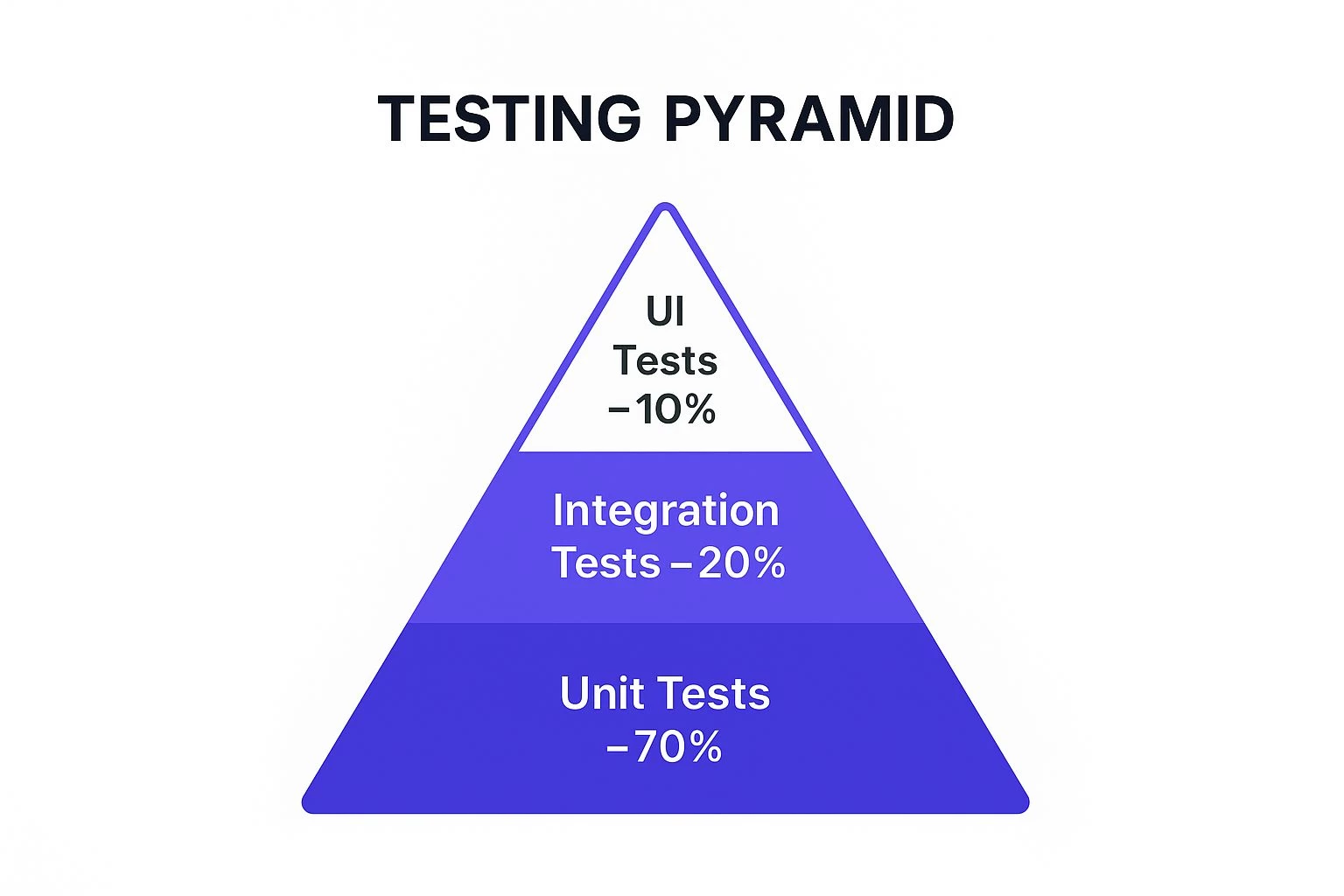

The Test Automation Pyramid is a strategic framework that guides one of the most effective quality assurance best practices: structuring your automated tests for maximum efficiency and stability. Popularized by experts like Mike Cohn, it advocates for a specific distribution of test types. The model suggests building a large, solid base of fast and isolated unit tests, a smaller middle layer of integration tests, and a very small top layer of slow, end-to-end UI tests. For drone operations software, this prevents an over-reliance on brittle UI tests for critical functions like pre-flight checklists or airspace authorization requests.

This hierarchical diagram visualizes the recommended distribution of automated tests, with a vast majority of tests at the unit level. The pyramid structure emphasizes that focusing on unit tests creates a stable foundation, while minimizing slow UI tests leads to a faster, more reliable, and less expensive testing process.

Instead of a top-heavy, slow, and flaky test suite (an anti-pattern known as the "Ice Cream Cone"), the pyramid model ensures rapid feedback. Unit tests run in milliseconds, allowing developers to catch bugs instantly. This approach balances comprehensive coverage with the need for speed, which is essential for agile development and continuous delivery pipelines managing complex drone mission data.

Why It's a Top Practice

Adhering to the Test Automation Pyramid is crucial because it directly tackles the biggest challenges in test automation: speed, cost, and reliability. End-to-end UI tests are notoriously slow to run and expensive to maintain, as minor UI changes can break them. A suite built primarily on fast unit tests provides quicker feedback, reduces maintenance overhead, and pinpoints failures more accurately.

Key Insight: The Test Automation Pyramid isn't just a testing strategy; it's a risk management model. It allocates the most testing resources to the code foundation, ensuring core logic is solid before verifying complex user workflows.

Google's testing blog has suggested a 70/20/10 split (70% unit, 20% integration, 10% end-to-end tests) as a reasonable starting point for teams that ship code at scale. For a drone platform, this means the logic for battery-level warnings or geofence compliance can be rigorously tested at the unit level, where failures are fast to detect and easy to pinpoint.
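A quick way to see whether a suite has the pyramid's shape, or has drifted toward the "Ice Cream Cone", is to compare test counts per layer. The minimal Python sketch below assumes you can already count tests by layer (for example, from directory names or test markers); the classification rules are a simplification of the model, not an official definition.

```python
def layer_shares(unit: int, integration: int, ui: int) -> dict:
    """Return each layer's fraction of the automated suite."""
    total = unit + integration + ui
    if total == 0:
        raise ValueError("the suite has no tests")
    return {"unit": unit / total, "integration": integration / total, "ui": ui / total}


def suite_shape(unit: int, integration: int, ui: int) -> str:
    """Classify a suite by test counts per layer.

    "pyramid": each layer is strictly larger than the one above it.
    "ice cream cone": UI tests outnumber unit tests (the anti-pattern).
    "other": anything in between, worth a closer look.
    """
    if unit > integration > ui:
        return "pyramid"
    if ui > unit:
        return "ice cream cone"
    return "other"


if __name__ == "__main__":
    # Hypothetical counts for a drone platform's automated suite.
    print(layer_shares(700, 200, 100), suite_shape(700, 200, 100))
```

For a hypothetical suite of 700 unit, 200 integration, and 100 UI tests, `layer_shares` returns fractions of 0.7, 0.2, and 0.1, and `suite_shape` reports "pyramid".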

How to Implement the Test Automation Pyramid

Adopting this model requires a disciplined approach to test creation and team-wide buy-in. Here are actionable steps to build a healthy test suite:

- Build from the Bottom Up: Prioritize the creation of unit tests. Ensure developers write comprehensive unit tests for every new function or module, such as flight log parsing or pilot credential validation.

- Use Test Doubles Effectively: Use mocks and stubs to isolate the code under test during unit testing. This prevents dependencies on external systems (like a live weather data feed) and ensures tests are fast and deterministic.

- Focus Integration Tests on Boundaries: Reserve integration tests for critical interaction points, such as verifying that the flight planning module correctly communicates with the airspace data service or that mission data is successfully saved to the database.

- Limit UI Tests to Critical Journeys: Automate only the most critical end-to-end user workflows via the UI. For drone software, this might include the complete journey of "Create Mission -> Execute Flight -> Upload Data -> Generate Report."
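The "Use Test Doubles Effectively" step might look like this in Python, using the standard library's `unittest.mock.Mock` to stand in for a live weather feed. The `go_no_go` function, the `current_wind_ms` method, and the 10 m/s wind limit are hypothetical assumptions for illustration; the point is that the tests run in milliseconds and give the same result every time, including for a simulated feed outage.

```python
import unittest
from unittest.mock import Mock


def go_no_go(weather_client, site_id: str, max_wind_ms: float = 10.0) -> str:
    """Decide whether a mission may launch based on current wind speed.

    `weather_client` is any object with a `current_wind_ms(site_id)` method;
    both that method and the 10 m/s default limit are hypothetical.
    """
    try:
        wind = weather_client.current_wind_ms(site_id)
    except ConnectionError:
        # Fail safe: no weather data means no launch.
        return "NO-GO: weather data unavailable"
    return "GO" if wind <= max_wind_ms else f"NO-GO: wind {wind} m/s"


class GoNoGoTests(unittest.TestCase):
    def test_calm_wind_allows_launch(self):
        weather = Mock()
        weather.current_wind_ms.return_value = 4.0  # stubbed reading
        self.assertEqual(go_no_go(weather, "site-7"), "GO")
        weather.current_wind_ms.assert_called_once_with("site-7")

    def test_high_wind_blocks_launch(self):
        weather = Mock()
        weather.current_wind_ms.return_value = 14.5
        self.assertEqual(go_no_go(weather, "site-7"), "NO-GO: wind 14.5 m/s")

    def test_feed_outage_fails_safe(self):
        weather = Mock()
        weather.current_wind_ms.side_effect = ConnectionError("feed down")
        self.assertEqual(go_no_go(weather, "site-7"), "NO-GO: weather data unavailable")


if __name__ == "__main__":
    # exit=False keeps the interpreter running after the tests finish.
    unittest.main(argv=["go-no-go-tests"], exit=False)
```

Because the weather client is injected as a parameter, the same function can receive the real client in production and a `Mock` in unit tests, with no network access needed.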

4. Continuous Integration and Continuous Testing

Continuous Integration (CI) and Continuous Testing (CT) represent a modern development practice where code changes from multiple developers are automatically merged into a shared repository several times a day. Each integration triggers an automated build and a suite of tests, providing immediate feedback on the quality and stability of the new code. For drone operations management software, this means a bug in a new geofencing feature or a data synchronization module is identified within minutes of being committed, not weeks later during a manual QA phase.

This approach ensures the primary codebase, or "main branch," always remains in a stable, deployable state. By automating the build and initial testing process, CI/CT eliminates manual integration headaches and creates a rapid, reliable feedback loop. This agility is essential for drone software, where new compliance rules, drone models, or sensor types require frequent and dependable updates to keep operations running smoothly and safely.

Why It's a Top Practice

Implementing CI/CT is one of the most impactful quality assurance best practices because it dramatically accelerates the feedback cycle and reduces integration risks. Instead of large, complex merges that are difficult to debug, the team works with small, frequent changes that are easy to validate. This constant validation builds confidence in the codebase and enables faster, more frequent releases of valuable features to drone operators.

Key Insight: CI/CT transforms software integration from a high-risk, periodic event into a low-risk, routine part of the daily workflow, directly improving software reliability and development velocity.

This model is the backbone of modern tech giants. Amazon and Netflix, for example, deploy changes many times per day, a pace only possible with robust automated pipelines. For drone teams, this means new features for improved flight planning or faster data processing can be delivered quickly and reliably, enhancing operational efficiency.

How to Implement Continuous Integration and Continuous Testing

Adopting CI/CT requires the right tools and a commitment to automation. Here are the foundational steps:

- Set Up a CI Server: Use tools like Jenkins, GitLab CI, or CircleCI to automate the build process. Configure it to monitor your code repository and trigger a build whenever new code is pushed.

- Maintain a Fast Test Suite: Your automated tests must run quickly to provide immediate feedback. Prioritize critical unit, integration, and API tests that cover core functionalities like mission validation and logbook accuracy.

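- Implement a Branching Strategy: Adopt a strategy such as Trunk-Based Development (short-lived branches merged frequently) or GitFlow to manage code changes cleanly, and enable branch protection so that nothing merges into the main branch without a passing build. Together, these keep the main branch protected and stable.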

- Use Feature Flags: Deploy new features behind feature flags. This allows you to integrate code into the main branch without making it visible to users, enabling testing in the production environment before a full release.
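Feature flags are often implemented with a deterministic percentage rollout: each user is hashed into a stable bucket, so the same operator always sees the same behavior. The Python sketch below shows that technique using only the standard library; the flag names and percentages are hypothetical, and hosted feature-flag services expose their own, different APIs.

```python
import hashlib


def rollout_bucket(flag: str, user_id: str) -> int:
    """Map a (flag, user) pair to a stable bucket from 0 to 99."""
    digest = hashlib.sha256(f"{flag}:{user_id}".encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") % 100


def is_enabled(flags: dict, flag: str, user_id: str) -> bool:
    """Return True if `flag` is on for this user.

    `flags` maps flag names to a rollout percentage (0 to 100). Unknown
    flags are treated as off, so merged-but-unreleased code stays dark.
    """
    percent = flags.get(flag, 0)
    return rollout_bucket(flag, user_id) < percent


# Hypothetical flag table; in practice this would be loaded from configuration.
FLAGS = {
    "new-geofence-engine": 10,  # 10% of operators
    "batch-log-upload": 100,    # fully released
}
```

A call such as `is_enabled(FLAGS, "new-geofence-engine", "operator-42")` returns the same answer on every request. If the flag table is loaded from configuration rather than hard-coded as here, raising the percentage from 10 toward 100 widens the rollout without redeploying code.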

5. Behavior-Driven Development (BDD)

Behavior-Driven Development (BDD) is a collaborative approach that extends Test-Driven Development (TDD) by using natural, human-readable language to define software requirements. It bridges the communication gap between business stakeholders, developers, and QA engineers by creating a shared understanding of how a feature should behave from the user's perspective. For drone operations, this means defining a flight plan submission process not in technical jargon, but in a simple, structured language that everyone can agree on.

This methodology uses a specific format, often "Given-When-Then," to frame user stories or "scenarios." For example, a scenario for a pre-flight checklist feature might read: Given a pilot is at a registered flight location, When they initiate a pre-flight check, Then the system must display the geofence compliance status. These plain-language scenarios become executable specifications that double as automated tests, ensuring the final software behaves exactly as intended.

Why It's a Top Practice

BDD is a top quality assurance best practice because it anchors development and testing to tangible business outcomes. It ensures that the software being built directly addresses user needs and operational requirements, minimizing rework caused by misunderstandings. In the high-stakes world of drone operations, clarity on features like automated battery swap alerts or real-time flight data logging is non-negotiable.

Key Insight: BDD transforms testing from a technical validation exercise into a continuous conversation about user behavior, ensuring that development efforts are always aligned with real-world operational needs.

Organizations outside aviation have been cited as BDD adopters: the BBC in developing its iPlayer service, and Capital One for its financial applications, in both cases to keep technical work aligned with predictable, user-facing behavior. For drone software, this means building features that pilots intuitively understand and trust.

How to Implement Behavior-Driven Development

Adopting BDD requires a commitment to collaboration and a structured communication process. Here are actionable steps to implement it:

- Focus on Behavior, Not Implementation: When writing scenarios, describe what the system should do, not how it should do it. Instead of specifying a button click, describe the user's goal, such as "submitting a flight plan for approval."

- Involve All Stakeholders: Host "Three Amigos" sessions (product owner, developer, and QA tester) to collaboratively write and review scenarios. This ensures business logic, technical feasibility, and testability are all considered from the start.

- Use BDD Frameworks: Leverage tools like Cucumber or SpecFlow to turn your plain-text scenarios into automated tests. These frameworks parse the Given-When-Then structure and execute corresponding code to validate the application's behavior.

- Maintain Scenarios Actively: Treat your BDD scenarios as living documentation. Regularly review and update them during backlog grooming sessions to ensure they accurately reflect the current requirements for features like airspace advisories or pilot credential management.
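Frameworks such as Cucumber and SpecFlow work by matching each plain-text step to a registered step definition and running it. The dependency-free Python sketch below imitates that mechanism to execute the pre-flight scenario described earlier in this section; it is not the API of any BDD framework, and `PreflightSystem` is a hypothetical stand-in for the real application.

```python
import re

STEPS = []  # (compiled pattern, handler) pairs, checked in registration order


def step(pattern):
    """Decorator that registers a handler for step text matching `pattern`."""
    def register(func):
        STEPS.append((re.compile(pattern), func))
        return func
    return register


class PreflightSystem:
    """Hypothetical stand-in for the application under test."""

    def __init__(self, registered_sites):
        self.registered_sites = set(registered_sites)
        self.display = None

    def run_preflight_check(self, site):
        ok = site in self.registered_sites
        status = "COMPLIANT" if ok else "UNREGISTERED SITE"
        self.display = f"Geofence compliance: {status}"


@step(r"^Given a pilot is at (?P<kind>registered|unregistered) flight location (?P<site>.+)$")
def pilot_at_site(ctx, kind, site):
    sites = {site} if kind == "registered" else set()
    ctx["system"] = PreflightSystem(registered_sites=sites)
    ctx["site"] = site


@step(r"^When they initiate a pre-flight check$")
def start_check(ctx):
    ctx["system"].run_preflight_check(ctx["site"])


@step(r'^Then the system displays "(?P<text>.+)"$')
def check_display(ctx, text):
    actual = ctx["system"].display
    if actual != text:
        raise AssertionError(f"expected {text!r}, got {actual!r}")


def run_scenario(text):
    """Run each non-blank line of a scenario against the registered steps."""
    ctx = {}
    lines = [line.strip() for line in text.splitlines() if line.strip()]
    for line in lines:
        for pattern, handler in STEPS:
            match = pattern.match(line)
            if match:
                handler(ctx, **match.groupdict())
                break
        else:
            raise LookupError(f"no step definition for: {line}")
    return ctx


SCENARIO = """
Given a pilot is at registered flight location Pier 9
When they initiate a pre-flight check
Then the system displays "Geofence compliance: COMPLIANT"
"""

if __name__ == "__main__":
    run_scenario(SCENARIO)
    print("scenario passed")
```

Each step definition is a small function bound to a regular expression, so the same Given step can be reused across many scenarios. A mismatched Then step raises an `AssertionError`, and a line with no matching definition raises a `LookupError`, similar in spirit to the undefined-step reports real frameworks produce.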

6. Exploratory Testing

Exploratory testing is a powerful quality assurance best practice that leverages the tester's creativity, skill, and intuition. Unlike scripted testing, which follows predefined steps, this approach combines learning, test design, and execution into a simultaneous, dynamic process. For drone operations management, this means a skilled tester can "explore" the software like a pilot would, uncovering usability flaws or complex bugs in flight logging, data sync, or emergency response features that rigid test cases would likely miss.

The essence of exploratory testing is structured freedom. Testers actively investigate the application, using their knowledge to guide their next steps and discover potential issues. For instance, a tester might simulate a drone losing its GPS signal mid-mission and then immediately try to generate a compliance report, probing how the system handles incomplete or corrupted data. This unscripted, context-driven investigation is excellent for finding edge-case defects.

Why It's a Top Practice

Scripted tests are good at verifying known requirements, but they can’t find what you don’t think to look for. Exploratory testing excels at discovering these "unknown unknowns." In the high-stakes world of drone operations, a subtle bug in a geofencing module or a confusing UI for battery level warnings could have serious real-world consequences.

Key Insight: Exploratory testing empowers human intelligence to uncover complex, scenario-based bugs that automated scripts cannot, ensuring the software is not just functional but also robust and intuitive for drone pilots under pressure.

Large software vendors such as Microsoft have long combined exploratory sessions with automated checks to catch bugs, including in Windows, that scripted tests missed. For drone software, this practice improves safety and reliability by checking that the platform handles unexpected user actions and environmental factors gracefully.

How to Implement Exploratory Testing

Effective exploratory testing is not random; it requires structure and clear goals. Here are actionable steps to integrate this practice:

- Use Test Charters: Create brief, mission-focused "charters" to guide each testing session. For example, a charter could be: "Explore the pre-flight checklist feature and discover how it handles missing or invalid pilot credentials." This provides focus without being overly prescriptive.

- Document Findings in Real-Time: Equip testers with tools to log notes, screenshots, and videos as they work. This captures their thought process and makes it easier to reproduce bugs and share insights with developers.

- Combine with Scripted Testing: Use exploratory testing to complement, not replace, automated and manual scripted testing. Use scripted tests for regression checks and core functionality, while using exploratory methods to probe new features and complex workflows.

- Conduct Debrief Sessions: After a testing session (often time-boxed to 60-90 minutes), hold a debrief meeting. This allows the tester to share their findings, insights, and any new areas of risk they uncovered, informing future testing efforts.
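Session records do not need heavy tooling. The Python sketch below, loosely modeled on session-based test management, keeps a charter, a time box, and timestamped notes, then produces a grouped summary for the debrief. The class, its fields, and the note categories are hypothetical choices for illustration, and the 90-minute default simply reflects the upper end of the 60-90 minute range mentioned in this section.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone
from typing import List, Optional, Tuple


def _utc_now() -> datetime:
    return datetime.now(timezone.utc)


@dataclass
class ExploratorySession:
    """Minimal session record: charter, time box, and timestamped notes."""
    charter: str
    tester: str
    timebox: timedelta = timedelta(minutes=90)
    started: datetime = field(default_factory=_utc_now)
    notes: List[Tuple[datetime, str, str]] = field(default_factory=list)

    def log(self, kind: str, text: str, at: Optional[datetime] = None) -> None:
        """Record a note; `kind` is expected to be "bug", "risk", or "question"."""
        self.notes.append((at or _utc_now(), kind, text))

    def over_time(self, now: datetime) -> bool:
        """True once the session has run past its time box."""
        return now - self.started > self.timebox

    def debrief(self) -> str:
        """Summarize notes grouped by kind, in the order they were logged."""
        lines = [f"Charter: {self.charter}", f"Tester: {self.tester}"]
        for kind in ("bug", "risk", "question"):
            items = [text for _, note_kind, text in self.notes if note_kind == kind]
            lines.append(f"{kind.upper()}S ({len(items)})")
            lines.extend(f"  - {item}" for item in items)
        return "\n".join(lines)


if __name__ == "__main__":
    session = ExploratorySession(
        charter="Explore the pre-flight checklist with missing or invalid pilot credentials",
        tester="qa-analyst-1",
    )
    session.log("bug", "Expired remote pilot certificate is accepted without warning")
    session.log("question", "Should a pending renewal count as valid?")
    print(session.debrief())
```

A tester would create one session per charter, call `log` whenever they spot a bug, risk, or open question, and bring the `debrief()` output to the debrief meeting. Notes with other categories are stored but not summarized in this sketch.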

7. Quality Gates and Definition of Done

Establishing quality gates and a clear Definition of Done (DoD) are essential quality assurance best practices that create mandatory checkpoints throughout the drone operations management software development lifecycle. Quality gates are specific, measurable criteria a feature must meet to advance to the next stage, while the DoD is a comprehensive checklist that confirms all necessary work for a task is complete. Together, they prevent defective code from moving downstream and ensure features are truly production-ready.

For drone operations, this means a new flight planning feature cannot move from development to testing until it passes a quality gate that verifies its unit tests cover 100% of critical path code. The feature’s DoD might require that it not only passes all automated tests but also has its user documentation updated and has been reviewed by a compliance expert. This framework enforces discipline and builds quality into the process, not just the product.

Why It's a Top Practice

This dual approach brings objectivity and clarity to the development process. It removes ambiguity about what "done" means and replaces subjective assessments with hard, verifiable criteria. For mission-critical software where a bug could lead to mission failure or compliance breaches, these checkpoints are non-negotiable. Automated gates act as a built-in quality control system, while the DoD ensures the remaining manual work is never skipped, so consistent standards are met for every release.

Key Insight: Quality gates and a Definition of Done institutionalize quality, transforming it from a hopeful outcome into a required prerequisite for progress, which is vital for high-stakes drone operations.

Companies running complex microservices architectures, Spotify among them, are often cited as using quality gates to ensure new services meet reliability and performance standards before deployment. In Scrum, the Definition of Done is a formal part of the framework: the Scrum Guide defines it as the commitment for every Increment, so that shippable software is produced consistently.

How to Implement Quality Gates and a DoD

Implementing these practices requires collaboration between development, QA, and product teams to define realistic yet rigorous standards.

- Define Clear, Measurable Criteria: Your quality gates should be unambiguous. Examples include "code coverage must exceed 85%," "zero critical security vulnerabilities detected by static analysis," or "API response time under 200ms."

- Automate Gate Checks: Integrate quality gate checks directly into your CI/CD pipeline using tools like SonarQube or Jenkins. This provides instant feedback and prevents manual overrides, making the process efficient and impartial.

- Create a Collaborative DoD: The entire team should agree on the Definition of Done. This checklist might include items like "code peer-reviewed," "all unit and integration tests passed," "user interface tested on target mobile devices," and "compliance documentation updated."

- Review and Adapt: Quality standards are not static. Regularly review the effectiveness of your gates and DoD. If bugs are still slipping through, your thresholds may be too low; if delivery slows to a crawl, they may be too strict.
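Gate checks are usually a small script that the CI pipeline runs after the test and analysis steps. The Python sketch below encodes the three example criteria from this section; the metric names and how they are collected are assumptions, since tools like SonarQube provide their own gate configuration, and a real pipeline would parse the values from coverage, static-analysis, and load-test reports.

```python
import operator

# Gate criteria mirroring the examples in this section. Metric names are
# hypothetical; a real pipeline would read the values from tool reports.
GATES = [
    ("coverage_pct", operator.gt, 85.0, "code coverage must exceed 85%"),
    ("critical_vulns", operator.eq, 0, "zero critical security vulnerabilities"),
    ("api_response_ms", operator.lt, 200.0, "API response time under 200 ms"),
]


def evaluate_gates(metrics: dict) -> list:
    """Return failure messages; an empty list means every gate passed.

    A missing metric counts as a failure, so a broken report cannot
    silently let a build through.
    """
    failures = []
    for name, compare, threshold, description in GATES:
        if name not in metrics:
            failures.append(f"{description}: metric '{name}' missing")
        elif not compare(metrics[name], threshold):
            failures.append(f"{description}: got {metrics[name]}")
    return failures


def gate_exit_code(metrics: dict) -> int:
    """Print each failure and return a process exit code (0 means pass)."""
    failures = evaluate_gates(metrics)
    for message in failures:
        print("QUALITY GATE FAILED:", message)
    return 1 if failures else 0


if __name__ == "__main__":
    # Example values only; this run fails the vulnerability gate.
    code = gate_exit_code({"coverage_pct": 88.2, "critical_vulns": 1, "api_response_ms": 143.0})
    print("exit code:", code)
```

In a CI job, a wrapper would call `sys.exit(gate_exit_code(metrics))` so that any failure produces a nonzero exit status and stops the pipeline. Treating a missing metric as a failure is a deliberate design choice: a broken report should block a build rather than let it pass unchecked.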

8. Test Data Management

Test Data Management (TDM) is a critical quality assurance best practice focused on planning, creating, and managing the data used for testing. For drone operations management software, this means having access to realistic and varied data sets that mimic real-world scenarios, such as diverse flight logs, pilot certifications, equipment maintenance records, and complex mission plans. Instead of using ad-hoc or incomplete data, TDM establishes a systematic process to ensure testing is comprehensive, repeatable, and secure.

The core principle is to provide high-quality data that can effectively validate software functionality without compromising sensitive information. This involves creating, subsetting, masking, and maintaining data sets tailored to specific testing needs. For example, when testing a new compliance reporting feature, QA teams need access to a variety of pilot profiles with different certifications and flight hour totals to ensure the system correctly flags potential violations. TDM ensures this data is readily available and accurately reflects operational complexities.

Why It's a Top Practice

Effective Test Data Management is crucial because flawed or insufficient test data leads to missed defects, security vulnerabilities, and unreliable test results. Testing a drone fleet management feature with only a handful of generic drone profiles won’t uncover issues that arise when managing hundreds of assets with varying maintenance schedules and flight histories. Proper TDM directly improves test coverage and accuracy, catching bugs that simple data sets would miss.

Key Insight: TDM transforms testing from a superficial check into a robust simulation of real-world operations, ensuring the software is resilient enough to handle the scale and complexity of actual drone missions.

Enterprise testing teams in regulated industries like finance and healthcare have long relied on TDM to ensure compliance and system reliability, and the practice is equally vital for drone service providers. It prevents data privacy breaches by masking personally identifiable information (PII) in copies of production data, and it enables synthetic data that covers edge cases, such as how the system handles GPS data from a drone operating in a challenging urban environment. TDM also depends on sound data quality management: test data is only useful if it is accurate and reliable.

How to Implement Test Data Management

Building a TDM strategy requires a structured approach to handling your testing assets. Here are actionable steps to get started:

- Implement Data Masking: When using copies of production data for testing, apply data masking techniques to obscure or pseudonymize sensitive information like pilot names, client details, or specific locations. This reduces privacy risk and supports compliance with regulations like GDPR.

- Create Reusable Data Sets: Develop and store standardized, reusable test data sets for common scenarios, such as new pilot onboarding, pre-flight checklists, or post-mission data uploads. This speeds up the testing cycle and ensures consistency.

- Use Synthetic Data Generation: Invest in tools that can generate realistic, synthetic data to test at scale or to cover scenarios not present in your production data. This is perfect for stress-testing how the platform handles thousands of simultaneous flight logs.

- Automate Data Refresh Processes: Establish automated scripts to refresh and clean up test environments after a test run. This prevents data contamination and ensures each test starts with a clean, known state, leading to more reliable results.
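The masking, synthetic data, and known-state steps can be sketched together in Python using only the standard library. This assumes flight-log records are dictionaries with hypothetical `pilot_name`, `client`, `lat`, and `lon` fields, and it uses keyed HMAC hashing as the masking technique; the key shown is a placeholder, and the field layout, coordinate rounding, and 2% missing-GPS rate are illustrative choices rather than recommendations.

```python
import hashlib
import hmac
import random
from datetime import datetime, timedelta, timezone

# Placeholder key for illustration; load a real key from a secret store.
MASKING_KEY = b"replace-with-test-env-secret"


def pseudonymize(value: str, prefix: str) -> str:
    """Replace a sensitive value with a stable token (same input, same token).

    Keyed HMAC keeps tokens consistent across tables, so joins still work,
    while the original value is not stored in the test data.
    """
    digest = hmac.new(MASKING_KEY, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return f"{prefix}-{digest[:10]}"


def mask_flight_log(record: dict) -> dict:
    """Return a masked copy of a record; the input is left untouched."""
    masked = dict(record)
    masked["pilot_name"] = pseudonymize(record["pilot_name"], "pilot")
    masked["client"] = pseudonymize(record["client"], "client")
    # Round coordinates to 2 decimal places (about 1 km of latitude).
    masked["lat"] = round(record["lat"], 2)
    masked["lon"] = round(record["lon"], 2)
    return masked


def synthetic_flight_logs(count: int, seed: int = 7) -> list:
    """Generate reproducible synthetic flight logs for scale testing.

    A fixed seed means every run starts from the same known data set.
    """
    rng = random.Random(seed)
    start = datetime(2025, 1, 1, tzinfo=timezone.utc)
    logs = []
    for i in range(count):
        logs.append({
            "flight_id": f"SYN-{i:06d}",
            "pilot_name": f"Synthetic Pilot {rng.randint(1, 200)}",
            "client": rng.choice(["utility-co", "agri-co", "survey-co"]),
            "lat": round(rng.uniform(-60.0, 60.0), 5),
            "lon": round(rng.uniform(-180.0, 180.0), 5),
            "takeoff": (start + timedelta(minutes=rng.randint(0, 525_600))).isoformat(),
            "duration_min": rng.randint(3, 45),
            # Edge case: roughly 2% of flights have no GPS fix at all.
            "gps_fix": rng.random() >= 0.02,
        })
    return logs


if __name__ == "__main__":
    production_like = {"pilot_name": "Jane Doe", "client": "Acme Utilities",
                       "lat": 51.50735, "lon": -0.12776}
    print(mask_flight_log(production_like))
    print(synthetic_flight_logs(2)[0])
```

Calling `synthetic_flight_logs(10_000)` twice with the same seed yields identical data, so each test run starts from the same known state, and the small share of records without a GPS fix exercises error handling. Keyed hashing is pseudonymization rather than full anonymization, so masked data may still count as personal data under GDPR while the key exists.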

Quality Assurance Best Practices Comparison

| Practice | Implementation Complexity 🔄 | Resource Requirements ⚡ | Expected Outcomes 📊 | Ideal Use Cases 💡 | Key Advantages ⭐ |
|---|---|---|---|---|---|
| Shift-Left Testing | Medium to High: Requires cultural shift and training | Skilled developers, test automation tools | Early defect detection, reduced bug-fixing costs, faster delivery | Projects needing high product quality and early QA involvement | Cost reduction, improved collaboration, reduced tech debt |
| Risk-Based Testing | Medium: Requires domain expertise, ongoing risk evaluation | Domain experts, stakeholder involvement | Focused testing on critical areas, optimized resource use | Business-critical applications with varied risks | Efficient resource use, better ROI, informed decisions |
| Test Automation Pyramid | High: Significant upfront unit test investment | Development resources for unit/integration tests | Faster feedback, reliable and maintainable test coverage | Continuous integration environments requiring fast tests | Speed, reliability, lower maintenance costs |
| Continuous Integration & Testing | High: Complex setup and process changes | Automation infrastructure, robust test suites | Early integration issue detection, faster delivery cycles | Teams practicing frequent code integration and delivery | Early defect detection, improved collaboration, fast delivery |
| Behavior-Driven Development (BDD) | Medium: Requires training and collaborative culture | Collaboration tools, stakeholder involvement | Clear requirements, living documentation, reduced ambiguity | Projects needing strong collaboration and clear behavior specs | Improved communication, understandable requirements |
| Exploratory Testing | Low to Medium: Less formal setup, depends on tester skill | Skilled testers with domain knowledge | Discovery of unexpected bugs, adaptive test coverage | Complex, dynamic applications where scripted tests fall short | High bug detection, immediate feedback, tester creativity |
| Quality Gates & Definition of Done | Medium: Requires setup of automated checks and agreement | Quality measurement tools, team buy-in | Consistent quality standards, prevents low-quality releases | Teams needing strict quality enforcement throughout lifecycle | Clear quality expectations, reduces tech debt |
| Test Data Management | High: Complex data provisioning, privacy compliance | Infrastructure for data storage, masking tools | Reliable test results, compliance, reduced environment setup time | Regulated industries and large-scale testing requiring real data | Data security, test reliability, environment synchronization |

Integrating Quality into Every Aspect of Your Drone Operations

Navigating the complexities of modern drone operations requires more than just skilled piloting and advanced hardware. It demands a foundational commitment to excellence, embedded within every flight, every report, and every client interaction. The journey through these quality assurance best practices, from shifting your focus left to the planning stages to implementing a robust Test Automation Pyramid, is not about adding more work. It’s about working smarter, safer, and more strategically.

By embracing concepts like Risk-Based Testing, you prioritize what truly matters, allocating your valuable resources to mitigate the most significant threats to your missions. Implementing Continuous Integration and Continuous Testing ensures that your workflows and software integrations remain reliable and efficient as your operations scale. This systematic approach transforms quality from a final inspection into a continuous, integrated process.

From Theory to Tangible Results

The real power of these methodologies is unlocked when they are applied consistently. Behavior-Driven Development (BDD) narrows the gap between operational requirements and real-world execution by describing expected behavior in plain language that pilots, managers, and clients can all review, keeping everyone aligned. Exploratory Testing lets experienced operators apply their judgment to uncover unforeseen issues that rigid scripts might miss.

Defining clear Quality Gates and a comprehensive "Definition of Done" for each phase of an operation removes ambiguity and sets a consistent standard for moving work forward. Backing all of this with a sound Test Data Management strategy helps ensure that testing and decisions rely on accurate, representative data. Each of these practices serves as a building block for a resilient, compliant, and highly professional drone program.
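To show how a Quality Gate can enforce a Definition of Done, here is a minimal sketch that lets a mission phase pass only when every required criterion is complete, and reports what is still missing. The criterion names in `POST_FLIGHT_DOD`, the `GateResult` class, and the `evaluate_gate` function are hypothetical examples, not a standard checklist or a feature of any particular platform.

```python
from dataclasses import dataclass, field

# Hypothetical Definition of Done for the post-flight phase of a mission.
# These criteria are illustrative assumptions, not an industry standard.
POST_FLIGHT_DOD = (
    "flight_log_uploaded",
    "battery_cycles_recorded",
    "incidents_reviewed",
    "deliverables_qc_passed",
)


@dataclass
class GateResult:
    passed: bool
    missing: list[str] = field(default_factory=list)


def evaluate_gate(completed: set[str],
                  criteria: tuple[str, ...] = POST_FLIGHT_DOD) -> GateResult:
    """The gate passes only when every Definition of Done criterion is met."""
    # Preserve the checklist order so missing items are reported consistently.
    missing = [c for c in criteria if c not in completed]
    return GateResult(passed=not missing, missing=missing)


if __name__ == "__main__":
    result = evaluate_gate({"flight_log_uploaded", "incidents_reviewed"})
    print(result.passed)   # False
    print(result.missing)  # ['battery_cycles_recorded', 'deliverables_qc_passed']
```

Returning the list of missing items, rather than a bare pass/fail, tells the team exactly what must be finished before the mission can be closed out.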

The Strategic Advantage of Proactive Quality

Ultimately, a deep integration of these quality assurance best practices provides a significant competitive advantage. It moves your operations from a reactive state, where you are constantly fixing problems, to a proactive one, where potential issues are identified and mitigated long before they impact a mission. This shift does more than just prevent accidents or ensure compliance; it builds a rock-solid reputation for reliability and professionalism.

This commitment to quality directly translates into tangible business benefits:

- Enhanced Client Trust: Consistently delivering safe, compliant, and high-quality results fosters loyalty and attracts high-value clients.

- Operational Efficiency: Standardized processes and automated checks reduce manual errors, minimize rework, and streamline workflows from pre-flight to final delivery.

- Improved Safety Culture: A structured quality framework reinforces safety as a core value, protecting your team, your equipment, and the public.

- Future-Proofing Your Business: As regulations evolve and technology advances, a strong QA foundation makes your operation agile and ready to adapt to new challenges and opportunities.

Adopting this mindset is an investment in the long-term health and success of your enterprise. It's about building an operation that not only meets today's standards but is also prepared to lead the industry tomorrow. Your dedication to quality will become your most valuable asset, setting you apart in a crowded marketplace and paving the way for sustainable growth.

Ready to put these quality assurance best practices into action with a platform built for professional drone operations? See how Dronedesk simplifies compliance, flight planning, and record-keeping, allowing you to embed quality into every step of your workflow. Explore the features at Dronedesk and take control of your operational quality today.
