EU AI Act Compliance: What We Did, What We Learned, and Why UK Businesses Need to Act Now

Earlier this month we attended a session run by the Department for Business and Trade's Explore AI Programme. It focused on the EU AI Act and what it means for UK companies with customers in Europe. We came away with a clearer picture of where we stood, and a long to-do list.

This post is about that process: what the EU AI Act actually requires, why it almost certainly applies to you if you have any EU clients, what we found when we looked honestly at our own AI use, and what we've done about it.

We're being transparent because we think that's the right thing to do. And because, frankly, most UK businesses haven't started this work yet.

What the EU AI Act Actually Is

The EU AI Act is the world's first comprehensive legal framework for artificial intelligence. It entered into force in August 2024, and like GDPR, it has extraterritorial reach. That means it applies to any business that places AI systems on the EU market, or whose AI outputs are used in the EU, regardless of where that business is based.

UK company with six clients in the Nordics? The Act applies to you. UK SaaS platform used by a handful of European emergency services? Same.

The Act takes a risk-based approach. It classifies AI systems into four tiers: unacceptable risk (banned outright), high risk (strict obligations), limited risk (transparency requirements), and minimal risk (no specific obligations, but good practice applies).

The compliance timeline is phased:

- 2 February 2025: Prohibited AI practices banned. AI literacy obligation live.

- August 2026: High-risk AI system requirements in force.

- 9 December 2026: EU Product Liability Directive takes effect (AI software treated as a "product" under strict liability law).

That first date isn't in the future. It passed. The AI literacy obligation is already a legal requirement.
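To make the phasing concrete, here is a minimal Python sketch that reports which of the milestones listed above are already in effect on a given date. The function name and data layout are our own illustration, and the day of the month for the August 2026 entry is taken as 2 August (the Act's general application date), which is an assumption because the list above gives only the month.

```python
from datetime import date

# Milestones as listed in this post. The day used for the August 2026
# entry (2 August) is an assumption; the post gives only the month.
MILESTONES = [
    (date(2025, 2, 2), "Prohibited AI practices banned; AI literacy obligation live"),
    (date(2026, 8, 2), "High-risk AI system requirements in force"),
    (date(2026, 12, 9), "EU Product Liability Directive takes effect"),
]


def obligations_in_effect(today: date) -> list[str]:
    """Return the milestones whose start date is on or before `today`."""
    return [label for start, label in MILESTONES if start <= today]


if __name__ == "__main__":
    for label in obligations_in_effect(date.today()):
        print(label)
```

Run on any date after 2 February 2025, the output will include the AI literacy obligation, which is the point of the paragraph above.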

Why We Needed to Take This Seriously

Dronedesk is a UK-based B2B SaaS platform for professional drone operations management. We serve over 600 clients including enterprise organisations, critical national infrastructure companies, and emergency services across the UK, with around six clients in the EU (mainly the Nordics) and twelve in the US.

We have AI in the product. We use AI tools internally. Both of those facts matter under the EU AI Act, and they matter differently.

As a company that builds AI functionality into our platform, we're a provider under the Act. As a company that uses AI tools to run the business, we're also a deployer. Those two roles carry different obligations.

One thing caught our attention in particular: Article 25, sometimes called the "vendor trap". If you put your own name on a high-risk AI system, substantially modify one, or change a third-party system's intended purpose so that it becomes high-risk, you can be treated as its provider rather than a deployer, with all the heavier obligations that come with it. That's worth knowing before you start building prompts into workflows and calling it your own system.

Penalties under the Act run up to €35 million or 7% of global annual turnover, whichever is higher, for the most serious breaches, plus the possibility of being publicly named. For a bootstrapped company of our size, that's existential.
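As a rough illustration of the "whichever is higher" rule, the sketch below computes the ceiling described above for a given turnover. The function name and the turnover figures are hypothetical, the result is an upper bound rather than an expected fine, and the sketch does not model any adjustments the Act makes for smaller companies.

```python
FIXED_CAP_EUR = 35_000_000   # fixed ceiling quoted in this post
TURNOVER_PERCENT = 7         # percentage of global annual turnover


def max_penalty_eur(global_annual_turnover_eur: int) -> int:
    """Return the higher of the fixed cap and 7% of turnover, in whole euros."""
    turnover_based = global_annual_turnover_eur * TURNOVER_PERCENT // 100
    return max(FIXED_CAP_EUR, turnover_based)


if __name__ == "__main__":
    # Hypothetical turnover figures, for illustration only.
    for turnover in (2_000_000, 1_000_000_000):
        print(turnover, max_penalty_eur(turnover))
```

For a small company, 7% of turnover is far below €35 million, so the fixed figure sets the ceiling. That is why the number is so disproportionate for a business of our size.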

What AI We Actually Use

Before we could do anything meaningful, we needed an honest inventory. Here's what that looked like.

In the Dronedesk platform

We have one AI-powered feature: a flight location risk assessment tool that calls the OpenAI API to generate an initial survey of potential risks and mitigation options for a given flight location.

It's user-invoked, not automatic. Account admins can disable it entirely. Everything it generates is fully editable by the operator. There's a disclaimer below the AI button: "ChatGPT makes mistakes. Check important info." The human operator always makes the final call on whether and how to fly.

No personal data goes to OpenAI, only location information. And OpenAI's terms confirm that input data isn't used to train their models.
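The controls described above (user-invoked, admin-disableable, location data only) can be sketched in a few lines of Python. This is not our production code: the field names, function names, and record structure are hypothetical, and the sketch deliberately stops short of any API call.

```python
# Hypothetical field names: only these location fields may leave the platform.
ALLOWED_FIELDS = ("latitude", "longitude", "location_name")


def may_generate(admin_enabled: bool, user_requested: bool) -> bool:
    """The feature runs only if the account admin allows it AND the user asked for it."""
    return admin_enabled and user_requested


def build_location_payload(flight: dict) -> dict:
    """Keep only allow-listed location fields; everything else is dropped."""
    return {key: flight[key] for key in ALLOWED_FIELDS if key in flight}


if __name__ == "__main__":
    # Hypothetical flight record containing a personal-data field.
    flight = {"latitude": 55.95, "longitude": -3.19, "pilot_name": "A. Pilot"}
    if may_generate(admin_enabled=True, user_requested=True):
        print(build_location_payload(flight))  # pilot_name is not included
```

An allow-list is used rather than a block-list so that any new field added to a flight record is excluded by default.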

We classified this feature as limited risk under the EU AI Act. It's in an aviation context, which initially made us look hard at the high-risk category, but the human oversight controls are strong enough to keep it in limited risk territory. We documented that rationale carefully.

We're providers of an AI system. That's a fact we're comfortable being transparent about.

Internal AI tools

Internally, the team uses generative AI tools including ChatGPT, Claude, Microsoft Copilot, and Gemini for drafting social media content, blog posts, website copy, and coding assistance.

We also use a range of business tools with embedded AI: our CRM (Twenty), email marketing platform (Encharge), outreach tooling (Manyreach), accounting software (Xero), customer support platform (Gleap), and content tools including Outrank and Notion.

All of these make us a deployer under the Act. None of them, based on our assessment, involve high-risk AI use.

What we don't do: no AI chatbot on the website, no AI in recruitment or HR, no automated decision-making about individuals, no biometric identification.

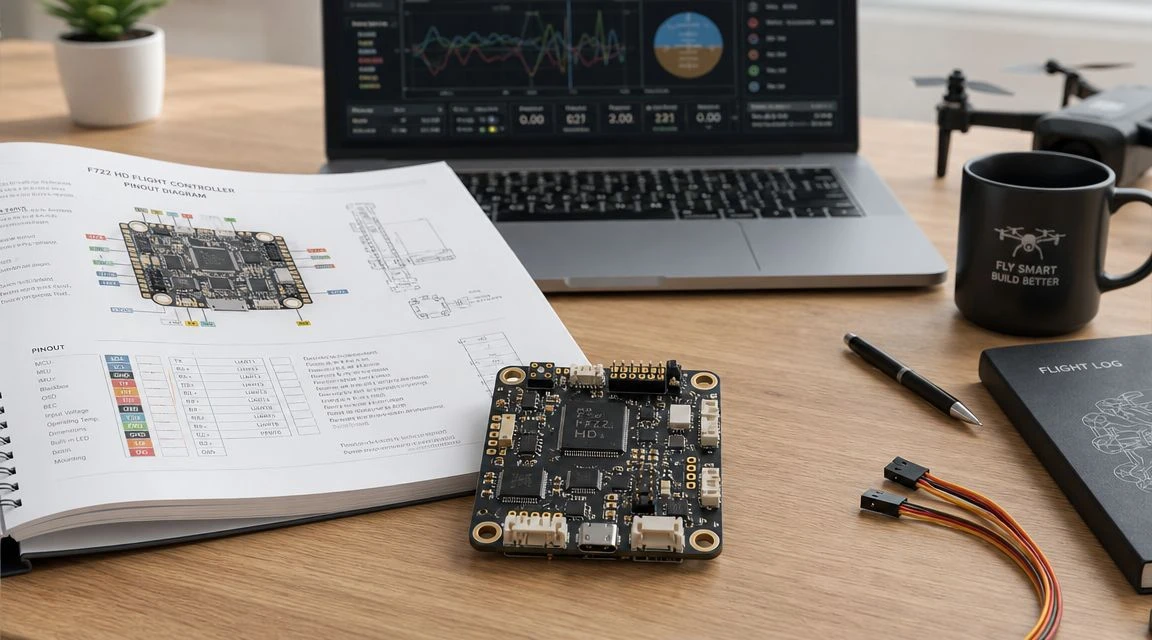

Understanding EU AI Act risk tiers: where your systems sit determines your obligations

What We Did About It

Once we understood where we stood, we worked through a structured compliance exercise. Here's what we've completed so far.

AI Governance and Acceptable Use Policy

We wrote a formal ISMS policy (GRI-ISMS-030) covering both our platform AI features and our internal use of AI tools. It defines roles and responsibilities, lists approved tools, sets out data protection rules for AI use, specifies prohibited uses, and includes an AI literacy framework for the team. It has a quarterly review cycle built in.

AI Systems Inventory

We built a register of every AI system in use across the business. Each entry is categorised (provider system, deployer system, embedded tool), risk-classified against the EU AI Act tiers, and documented with enough detail to show our reasoning. No high-risk systems were identified.
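For anyone starting their own register, a minimal Python sketch of the structure might look like the following. The class and field names are our own illustration rather than a prescribed format, and the second entry is a placeholder whose tier is assumed purely for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Category(Enum):
    PROVIDER = "provider system"
    DEPLOYER = "deployer system"
    EMBEDDED = "embedded tool"


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


@dataclass(frozen=True)
class AISystemEntry:
    name: str
    category: Category
    risk_tier: RiskTier
    rationale: str  # enough detail to show the reasoning behind the tier


def needs_escalation(register: list[AISystemEntry]) -> list[AISystemEntry]:
    """Return entries classified as high or unacceptable risk."""
    flagged = {RiskTier.HIGH, RiskTier.UNACCEPTABLE}
    return [entry for entry in register if entry.risk_tier in flagged]


REGISTER = [
    AISystemEntry(
        name="Flight location risk assessment",
        category=Category.PROVIDER,
        risk_tier=RiskTier.LIMITED,
        rationale="User-invoked, admin can disable, output fully editable by the operator.",
    ),
    # Placeholder entry: the name and tier are assumptions for illustration.
    AISystemEntry(
        name="Example embedded business tool",
        category=Category.EMBEDDED,
        risk_tier=RiskTier.MINIMAL,
        rationale="Illustrative only.",
    ),
]

if __name__ == "__main__":
    print(needs_escalation(REGISTER))  # an empty list means no high-risk systems
```

Keeping the rationale as a required field is the design choice that matters: the classification is only defensible if the reasoning is recorded next to it.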

Risk Classification

For the flight risk assessment feature, we documented a detailed rationale for why it sits in the limited risk category despite being in an aviation context. The human oversight controls are the key factor, and we wanted that on record.

AI Content Disclosure Notices

Article 50 of the Act requires transparency when AI is used to generate certain types of content. We created two versions of a standard disclosure:

- A short version for content that's AI-assisted but substantially human-edited.

- A fuller version for content that's predominantly AI-generated.

You'll find the short version at the bottom of this post.

Information Security Overview update

We added a new section on AI governance to our client-facing security documentation. Enterprise clients and procurement teams asking about our AI use now get a clear, structured answer rather than a verbal explanation that varies conversation to conversation.

Trust Centre FAQs

We created eleven AI-related FAQs for our public Trust Centre covering the feature itself, how data is handled, how we classified the risk level, our compliance approach, team training, and how to request our governance documentation.

What We Haven't Done Yet

Transparency cuts both ways. Here's what's still outstanding.

AI literacy training for the team. Article 4 requires providers and deployers to ensure staff have sufficient AI literacy to understand the systems they use or oversee. With a small team, this isn't complex, but it's already past the February 2025 deadline. We're running the session imminently.

Vendor compliance letters to OpenAI and key tool providers, asking them to confirm their own compliance posture under the Act.

Insurance review with our broker. Most professional indemnity policies have what's sometimes called a "Silent AI" gap, meaning AI-related claims are neither explicitly covered nor explicitly excluded. With the Product Liability Directive coming into force in December 2026, that gap needs to close before then.

A client-facing AI transparency summary and a dedicated website section on our AI governance approach.

We're being open about the gaps because we think that's more useful to other businesses than pretending we've got everything wrapped up.

What This Means For You

If your business uses AI tools and has any EU customers, the AI literacy obligation is already in effect. That applies whether your tool is a purpose-built AI system or something like Copilot embedded in Microsoft 365.

A few questions worth asking yourself now:

- Do you know every AI system your team is using, including the ones embedded in your existing business tools?

- Do you know how they're classified under the Act?

- Has your team had any kind of structured AI literacy session?

- If you're building AI into your own product, do you know whether that makes you a provider rather than a deployer?

Most UK businesses we speak to haven't asked any of these questions yet. That's not a criticism. The Act is genuinely complex, the timeline has been confusing, and there's been a lot of noise. But the first obligations are live, and the high-risk deadline in August 2026 will arrive faster than people expect.

Our Design Principle Going Forward

As we add AI features to Dronedesk over time (and we will), our guiding principle is augmentation, not automation. AI should make human operators better informed and more efficient. It shouldn't remove the human from decisions that matter.

That principle isn't just about compliance. It reflects how we think AI should work in safety-critical contexts. Drone operations involve real decisions with real consequences. AI can help surface risk information that a human might miss. But the pilot still assesses it, edits it if necessary, and decides what to do.

That's how the flight risk assessment feature works today. It's how any future AI features will be designed.

Requesting Our Documentation

If you're a client, prospective client, or procurement team assessing Dronedesk's AI governance, you can request copies of our AI Governance Policy, our AI Systems Inventory, and the relevant section of our Information Security Overview via our Trust Centre.

We believe in proactive transparency on security and compliance, not waiting to be asked.

Get in touch via our contact page or speak to your account manager.

How to Win More Work as a Drone Service Provider →

How to Win More Work as a Drone Service Provider → Your UAS Pilot Logbook: A Complete Guide for 2026 →

Your UAS Pilot Logbook: A Complete Guide for 2026 → Drone Control Software: What It Does and Who Needs It →

Drone Control Software: What It Does and Who Needs It → Flight Computer Manual: Master Drone Operations →

Flight Computer Manual: Master Drone Operations → UAV Software Guide for Flight Planning and Compliance →

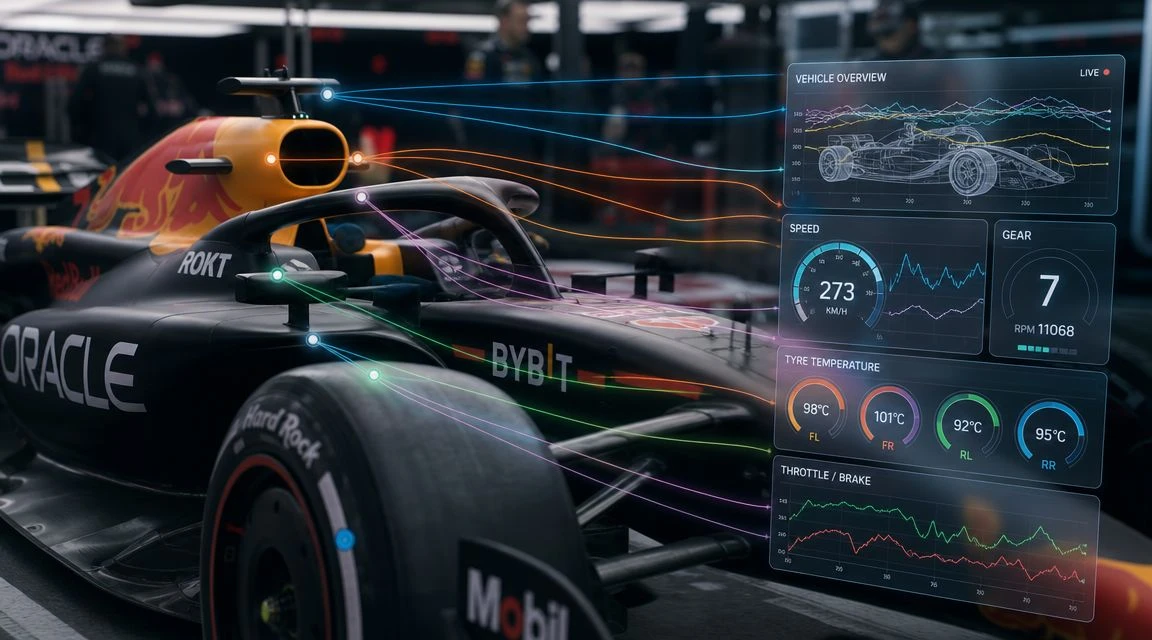

UAV Software Guide for Flight Planning and Compliance → F1 Telemetry Data Explained: From Sensor to Strategy →

F1 Telemetry Data Explained: From Sensor to Strategy → Drone Software Companies Compared for Commercial Ops →

Drone Software Companies Compared for Commercial Ops → What Makes a Great Drone Platform for Commercial Teams →

What Makes a Great Drone Platform for Commercial Teams → Your Guide to the Ultra Light Aircraft Licence in 2026 →

Your Guide to the Ultra Light Aircraft Licence in 2026 → UAVs for Sale: A Professional Buyer's Guide for 2026 →

UAVs for Sale: A Professional Buyer's Guide for 2026 →